What’s the best way to find the root cause of almost any software issue? Read the logs, of course! But in a microservices architecture, this isn’t as straightforward as you may think. For example, if you have 20 different containers, which one would you check first? How do you quickly find the appropriate logs? How do you avoid wasting your time reading irrelevant logs? I’ll answer these questions, and a few others, in this post. Read on to learn how to perform log management in a microservices architecture.

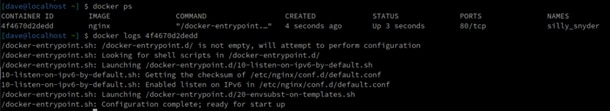

Let’s talk about the basics first. If you have an application running in a Docker container, how do you access the application’s logs? By default, Docker uses the json-file logging driver, which lets you read a container’s logs with the docker logs command followed by the container’s name or ID.

If you’re using Kubernetes, you can use the kubectl logs command instead.
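
To make the two commands concrete, here is a minimal Python sketch that builds the `docker logs` and `kubectl logs` command lines and can run them with `subprocess`. The helper names are illustrative and not part of either tool, and the names `web`, `web-7d9f`, and `shop` in the usage note are made-up placeholders. The `--tail`, `--since`, and `--timestamps` flags exist in both CLIs.

```python
import subprocess
from typing import List, Optional


def docker_logs_cmd(container: str, tail: int = 100,
                    since: Optional[str] = None) -> List[str]:
    """Build a `docker logs` command for one container (name or ID)."""
    cmd = ["docker", "logs", "--timestamps", "--tail", str(tail)]
    if since:
        cmd += ["--since", since]  # e.g. "10m" for the last ten minutes
    cmd.append(container)
    return cmd


def kubectl_logs_cmd(pod: str, namespace: str = "default", tail: int = 100,
                     since: Optional[str] = None) -> List[str]:
    """Build the equivalent `kubectl logs` command for one pod."""
    cmd = ["kubectl", "logs", "--namespace", namespace, "--timestamps",
           f"--tail={tail}"]
    if since:
        cmd.append(f"--since={since}")
    cmd.append(pod)
    return cmd


def read_logs(cmd: List[str]) -> str:
    """Run the command and return its output (the CLI must be installed)."""
    # Containers may write to stdout and stderr, so capture both together.
    result = subprocess.run(cmd, stdout=subprocess.PIPE,
                            stderr=subprocess.STDOUT, text=True, check=True)
    return result.stdout
```

For instance, `read_logs(docker_logs_cmd("web", since="10m"))` would return the last ten minutes of output from a container named `web`, and `read_logs(kubectl_logs_cmd("web-7d9f", namespace="shop"))` would do the same for a pod, assuming those exist on your machine or cluster.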

Now, this is easy, right? It works pretty well if you only have one or two containers. If you have more, however, reading the logs from all the different containers becomes a challenge. Imagine your application is having some trouble. You don’t know exactly what the problem could be, so you read each container’s logs, one by one, looking for error messages. Even when you find one, you usually aren’t done: you most likely need to go back to the other containers and reread their logs for the time period when the error occurred to get a better picture. Clearly, for bigger stacks, you need a better solution.
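
The manual back-and-forth described above amounts to merging several time-ordered streams into one timeline. As a rough illustration, the sketch below interleaves log lines from several containers by timestamp. It assumes each line starts with a UTC timestamp ending in `Z`, as printed by `docker logs --timestamps`, and that each container’s lines are already in order. The container names and messages are invented sample data.

```python
import heapq
from typing import Dict, Iterable, Iterator, NamedTuple, Tuple


class Entry(NamedTuple):
    key: Tuple[str, str]  # (seconds part, zero-padded fraction) used for ordering
    container: str
    message: str


def sort_key(timestamp: str) -> Tuple[str, str]:
    """Turn '2024-01-05T10:00:00.5Z' into a key that sorts chronologically."""
    whole, _, fraction = timestamp.rstrip("Z").partition(".")
    return (whole, fraction.ljust(9, "0"))


def parse(container: str, lines: Iterable[str]) -> Iterator[Entry]:
    for line in lines:
        timestamp, _, message = line.rstrip("\n").partition(" ")
        if timestamp:  # skip blank lines
            yield Entry(sort_key(timestamp), container, message)


def merge_logs(streams: Dict[str, Iterable[str]]) -> Iterator[Entry]:
    """Lazily merge already-sorted per-container streams into one timeline."""
    return heapq.merge(*(parse(name, lines) for name, lines in streams.items()))


# Invented sample data standing in for real container output.
sample = {
    "api": ["2024-01-05T10:00:01.200000000Z request received",
            "2024-01-05T10:00:03.000000000Z upstream timeout"],
    "db": ["2024-01-05T10:00:02.500000000Z connection pool exhausted"],
}
for entry in merge_logs(sample):
    print(f"[{entry.container}] {entry.message}")
```

Even this toy version shows why a single view helps: the database error lands between the two API lines, which is hard to see when each container is read separately. A centralized logging tool does this kind of merging for you, at scale and with search on top.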

A centralized log management solution is the best way to manage logs in a microservices architecture. Why? Simply put, it solves the problem described in the previous section. If you have a lot of containers, instead of reading their logs separately, you can read them in one place. As the name suggests, centralized logging tools help you gather the logs from all containers in a centralized place, which is important for your production environment. Remember, the more containers you have, the more time it takes to find the appropriate logs in case of issues. Of course, sometimes you know up front exactly which container is having issues. But in most cases, you don’t. Therefore, to solve issues as quickly as possible, centralized log management is crucial. In addition to simplifying the entire debugging process, it gives you other advantages as well.

When switching to centralized logging solutions, your logs are also shipped out of the container, which means you won’t lose them. If you don’t move the log data out of the container, when you delete a Docker container, you will no longer have access to its logs. With centralized logging, however, logs are sent to an external location. This helps with debugging issues. If you suddenly find out the container is gone, you can check the logs to see what happened. If your colleagues or clients report an issue with the application that happened before the weekend, for example, you’ll be able to easily find the logs, even if the container has been restarted in the meantime.

Centralized logging also helps with efficient teamwork. Imagine a few engineers trying to solve the same issue. If they all have to use docker logs or kubectl logs on their own machines, they’ll waste a lot of time doing the same job and sending log snippets to each other, trying to align. With centralized logging, they can all have the same view. Most cloud-based centralized logging solutions even allow you to easily share or mark log entries so other team members can see them in real time. Less time spent finding the same logs and aligning between engineers means more time spent on actual problem-solving.

We all hate it when our systems crash because of something as trivial as running out of disk space, right? Well, even when monitoring systems are in place, this can happen. Applications inside containers often write their logs to log files, and these log files live on disk. If you’re unlucky and your application starts writing an enormous amount of event data (due to an error, a drastically increased load, or a misconfiguration), you may run out of disk space. The more containers you have, the higher the probability of this happening. When you ship all the logs to a centralized external system, however, you only need to monitor log size in one place.
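
As a small illustration of watching log growth on a host, this sketch sums the size of log files under a directory and compares the total with a budget. The `*.log` pattern and the 500 MB threshold are illustrative assumptions. On a default Linux install, the json-file driver writes under /var/lib/docker/containers, which usually requires root to read.

```python
import shutil
from pathlib import Path


def log_bytes(directory: Path, pattern: str = "*.log") -> int:
    """Total size in bytes of files matching `pattern` anywhere under `directory`."""
    return sum(p.stat().st_size for p in directory.rglob(pattern) if p.is_file())


def check_logs(directory: Path, max_log_bytes: int = 500 * 1024 * 1024) -> str:
    """Report log usage against a budget, plus free space on that filesystem."""
    used = log_bytes(directory)
    free = shutil.disk_usage(directory).free
    status = "WARNING" if used > max_log_bytes else "ok"
    return f"{status}: logs use {used} bytes, {free} bytes free on disk"
```

Calling `check_logs(Path("/var/lib/docker/containers"))` from a scheduled job would give a crude early warning on one host. With many hosts, you would have to repeat this everywhere, which is the burden centralized logging removes.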

So far, we’ve talked about the benefits of centralized logging for your microservices architecture. There are many different ways to set one up, however. You can build your own or use a managed service, for example. The principle is the same: in both cases, you’ll have to instruct Docker or Kubernetes to ship the logs from the containers to a centralized external destination. Building your own can make sense if you already run such a system for other logs.
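
One way to instruct Docker to ship logs elsewhere is the daemon configuration file. The sketch below renders a daemon.json that selects the syslog logging driver. The `log-driver` and `log-opts` keys and the `syslog-address` and `tag` options are Docker settings, while the address `udp://logs.example.com:514` is a placeholder for whatever endpoint your log system exposes. A managed service typically documents its own driver or agent setup, so treat this as a generic illustration only.

```python
import json
from typing import Dict


def daemon_config(driver: str, options: Dict[str, str]) -> str:
    """Render Docker daemon settings that set the default logging driver."""
    config = {"log-driver": driver, "log-opts": options}
    return json.dumps(config, indent=2)


# Placeholder endpoint; replace with the address of your own log collector.
print(daemon_config("syslog", {"syslog-address": "udp://logs.example.com:514",
                               "tag": "{{.Name}}"}))
```

The output would typically be saved as /etc/docker/daemon.json on Linux. The Docker daemon has to be restarted to pick it up, and the new default applies only to containers created afterward.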

A managed cloud-based solution, however, provides you with some extra functionality. Of course, it also offloads most maintenance tasks and makes installation easy. If you’re looking for a top-class solution, you should consider SolarWinds® Loggly®. Remember when I said a cloud-based solution can give you some extra functionality? Well, Loggly is the best example of this. Proactive monitoring, integration with other tools, visualization capabilities—these are only a few of the tool’s features. Now, let me explain what all of this means.

Traditional centralized monitoring solutions help you find the root cause of issues faster. Loggly takes you to the next level. It can help you solve issues even faster because it understands how components interact and identifies correlations between them. Thus, it can do most of the debugging for you automatically. Even more importantly, however, its proactive monitoring can help you avoid issues. It can detect unusual behavior, ultimately helping you eliminate issues before they affect users—a real game-changer. But that’s not all. You can integrate Loggly with SolarWinds AppOptics™—a great application performance monitoring (APM) tool—and get an ultimate all-in-one monitoring and troubleshooting solution. Read more about how AppOptics and Loggly work together here.

We all know the importance of logs. They’re usually the first place to check when issues occur. As I’ve explained in this post, finding issues in a microservices architecture is a bit more complicated. Centralized log management tools help solve the problems arising from having numerous containers. Let’s not forget the point of having logs is to solve issues. However, our ultimate goal is to avoid or eliminate issues before they actually affect users. Traditionally, we check logs after we realize there’s an issue. Tools like Loggly can change this. With its proactive monitoring and advanced log troubleshooting, Loggly helps you not only solve issues but achieve the ultimate goal: avoiding them in the first place. But don’t take my word for it—create your free account here and check out Loggly yourself.

This post was written by Dawid Ziolkowski. Dawid has 10 years of experience as a network/system engineer, has worked in DevOps, and has recently worked as a cloud-native engineer. He’s worked for an IT outsourcing company, a research institute, a telco, a hosting company, and a consultancy company, so he’s gathered a lot of knowledge from different perspectives. Nowadays, he’s helping companies move to the cloud and/or redesign their infrastructure for a more cloud-native approach.
