Last updated: October 2024

A company’s success depends upon the performance of its infrastructure and applications. We know logs are important in troubleshooting performance and availability issues. But is troubleshooting the only purpose of logs? Of course not. Over the years, you’ve likely realized they’re important in other ways, too. For instance, logs are helpful in performance analysis, and they can feed the visualizations on business dashboards. JSON is the best format for storing your logs, and here’s why.

Usually, we log application data to a file. But we need a better framework. If we write logs as JSON, we can easily search fields with a JSON key. Moreover, the structured format will make it easy for us to perform an application log analysis. So, to get the most out of your JSON logging, it’s important to keep some practices in mind.

JSON, short for JavaScript Object Notation, is a text-based data format. We use JSON to store and exchange data in a human-readable form. Data is organized as objects made up of key-value pairs, where each value can be a string, number, boolean, null, array, or another object. The following is an example of a JSON object.

{
  "employee": {
    "name": "Rob",
    "age": "35",
    "salary": 5600,
    "city": "New York",
    "married": true
  }
}

Log files are records generated whenever you execute a process or perform an action on an application. The log files generally have a complex text format.

JSON logging is a kind of structured logging, meaning you’ll have your log data parsed and stored in a structured JSON format.

Do you know the problem with log files? The text data is unstructured. As a result, it’s difficult to filter or query the log files for information. Wouldn’t it be nice if developers were able to filter logs based on a field? The goal of JSON logging is to solve these problems and many others.

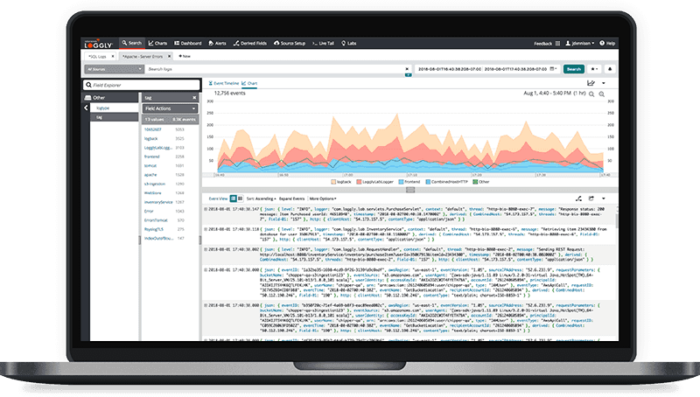

Currently, the use of artificial intelligence (AI) is increasing. AI can monitor your log files and find anomalies. But for log files to be machine-readable, they need to come in a structured format. And what could be better than making the logs structured in JSON format? JSON files are easily readable. If you’re using a log monitoring tool like SolarWinds® Loggly®, the tool will quickly load the JSON data. Let’s understand with an example. Suppose you have the following JSON.

{
  "employees": [
    {
      "name": "Rob",
      "age": "35",
      "salary": 5600,
      "city": "New York",
      "married": true
    },
    {
      "name": "John",
      "age": "43",
      "salary": 4500,
      "city": "Chicago",
      "married": false
    }
  ]
}

Now, you could have data like this for hundreds of employees. How do you filter that much data? If you’re using Loggly, you can use a feature called Dynamic Field Explorer. Click on any field, such as city, to see the distinct cities and the employee count in each of them. Bottom line? With JSON logging, a complex search query is resolved easily with a single click.
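To make the idea of field-based filtering concrete, here is a minimal local sketch in Python. It is not how Loggly or Dynamic Field Explorer is implemented; it only illustrates why one JSON object per log line makes counting and filtering by a field simple. The sample lines and the helper names `count_by_field` and `filter_by_field` are assumptions made for illustration.

```python
import json
from collections import Counter

# Hypothetical JSON log lines, one object per line (sample data for illustration).
LOG_LINES = [
    '{"name": "Rob", "age": 35, "salary": 5600, "city": "New York", "married": true}',
    '{"name": "John", "age": 43, "salary": 4500, "city": "Chicago", "married": false}',
    '{"name": "Ana", "age": 29, "salary": 5100, "city": "Chicago", "married": false}',
]


def count_by_field(lines, field):
    """Count how many records carry each value of `field`."""
    counts = Counter()
    for line in lines:
        record = json.loads(line)
        if field in record:
            counts[record[field]] += 1
    return counts


def filter_by_field(lines, field, value):
    """Return the parsed records whose `field` equals `value`."""
    records = (json.loads(line) for line in lines)
    return [r for r in records if r.get(field) == value]


city_counts = count_by_field(LOG_LINES, "city")
chicago_names = [r["name"] for r in filter_by_field(LOG_LINES, "city", "Chicago")]
print(dict(city_counts))
print(chicago_names)
```

Doing the same against unstructured text would require a custom parser or regular expression for every field you want to query.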

Implementing JSON logging can be straightforward and highly beneficial for structured log data. Here are concise examples for several programming environments:

In Python, we can use the standard logging library with a custom formatter.

import json
import logging

class JsonFormatter(logging.Formatter):
    def format(self, record):
        # Serialize the fields we care about as one JSON object per line
        return json.dumps({"level": record.levelname, "message": record.getMessage()})

logger = logging.getLogger("json_logger")
logger.setLevel(logging.INFO)  # the default threshold (WARNING) would drop info() calls
handler = logging.StreamHandler()
handler.setFormatter(JsonFormatter())
logger.addHandler(handler)

logger.info("This is a test log message")

In Java, we can utilize Logback with a JSON encoder. Add the logstash-logback-encoder dependency (Maven):

<dependency>
  <groupId>net.logstash.logback</groupId>
  <artifactId>logstash-logback-encoder</artifactId>
  <version>6.6</version>
</dependency>

Logback configuration (logback.xml):

<configuration>
  <appender name="jsonConsole" class="ch.qos.logback.core.ConsoleAppender">
    <encoder class="net.logstash.logback.encoder.LogstashEncoder" />
  </appender>

  <root level="info">
    <appender-ref ref="jsonConsole" />
  </root>
</configuration>

We can leverage the Winston library in Node.js:

const winston = require('winston');

const logger = winston.createLogger({
  format: winston.format.json(),
  transports: [new winston.transports.Console()]
});

logger.info('This is a test log message');

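In Go, we can use the standard log package with custom JSON formatting:

package main

import (
    "encoding/json"
    "log"
    "os"
    "time"
)

// LogMessage is the shape of each JSON log entry.
type LogMessage struct {
    Level   string `json:"level"`
    Time    string `json:"time"`
    Message string `json:"message"`
}

func main() {
    log.SetFlags(0) // drop the default timestamp prefix so each line is pure JSON
    log.SetOutput(os.Stdout)

    message := LogMessage{
        Level:   "INFO",
        Time:    time.Now().Format(time.RFC3339),
        Message: "This is a test log message",
    }
    jsonMessage, err := json.Marshal(message)
    if err != nil {
        log.Fatalf("failed to encode log message: %v", err)
    }
    log.Println(string(jsonMessage))
}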

In .NET, we can implement JSON logging with Serilog. Install the packages:

dotnet add package Serilog.AspNetCore
dotnet add package Serilog.Sinks.Console

Configuring in Program.cs:

using Serilog;

public class Program
{
    public static void Main(string[] args)
    {
        // Write every event to the console as a JSON object
        Log.Logger = new LoggerConfiguration()
            .WriteTo.Console(new Serilog.Formatting.Json.JsonFormatter())
            .CreateLogger();

        Log.Information("This is a test log message");

        // Flush buffered events before the process exits
        Log.CloseAndFlush();
    }
}

Implementing JSON logging in various programming languages and frameworks is both practical and beneficial. These concise examples show how to configure JSON logging, ensuring your logs are structured, readable, and easily parsed by log management tools like SolarWinds Loggly.

Have you ever written code without writing the unit test cases? What happened when you found out you had to write them after all? You had to start from the beginning and work through every section of your code before, after a long effort, you finally finished. Avoid this by writing the test cases at the beginning. The same applies to JSON logging. While writing the code for new software, start adding JSON logs from the beginning. This is much easier than retrofitting JSON logs after you’ve completed development.

Our goal with JSON logging is to make our logs highly readable and parseable. If you have a schema with lots of fields, the schema is hard for the human eye to read, and you may miss some important information. To avoid that, include only the objects you actually intend to read. For example, you can encode all your incoming request parameters in a single field. If you send them as individual fields, it’ll only increase the confusion. If you’re using Loggly, you can use json.level to instantly filter and find the log data with warnings or errors.
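As a small illustration of the single-field idea, the Python sketch below groups all request parameters under one key, so the top level of each entry stays limited to a few fields. The key name `request_params` and the helper `build_log_entry` are hypothetical choices for this example, not names required by any tool.

```python
import json


def build_log_entry(level, message, request_params):
    """Build a log entry that keeps all request parameters under one key."""
    return {
        "level": level,
        "message": message,
        # One field for all parameters instead of one top-level field per parameter.
        "request_params": request_params,
    }


entry = build_log_entry("WARN", "Slow response", {"page": "2", "sort": "name", "q": "json"})
line = json.dumps(entry, sort_keys=True)
print(line)
```

However many parameters a request carries, the entry still exposes only three top-level fields, and `level` remains available for quick filtering.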

Deeply nested JSON objects can be hard to parse and query. Flattening these structures by concatenating keys with a separator (like a dot or underscore) can make the logs more accessible. For example, instead of nesting:

{
  "user": {
    "name": "John",
    "age": 30
  }
}

We can use something like:

{
  "user_name": "John",
  "user_age": 30
}

JSON supports nested objects along with strings and numbers. Keep a uniform data type in each field to make filtering and searching much easier. For example, suppose you have a field called Age in a JSON log. In one of the logs, you wrote the value as a string.

"Age": "29"

While in another, you wrote the value as a number.

"Age": 54

Wonder what will happen? If you search the Age field across all the data with a string filter, you will miss every entry that stored the value as a number. Consistency is critical.

Using appropriate data types in JSON logs ensures that each field is interpreted correctly by log analysis tools. Numeric values should be logged as integers or floats to allow for proper numerical operations like aggregation and comparison. Likewise, timestamps should be logged in a standardized date-time format for precise time-based queries. Proper data typing prevents errors during data processing and analysis, maintains data integrity by validating data during logging, and facilitates integration with other systems and tools. Consistently using correct data types avoids mismatches and additional data transformation steps, ensuring efficient and accurate log analysis.
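The flattening and typing advice above can be combined in a small normalization step that runs before an entry is written. The following Python sketch is one possible approach under stated assumptions: the `FIELD_TYPES` schema, the underscore separator, and the function names are all hypothetical, and ISO 8601 in UTC is used as one example of a standardized timestamp format.

```python
from datetime import datetime, timezone


def flatten(record, parent_key="", sep="_"):
    """Flatten nested dicts by joining keys with `sep`, e.g. user -> name becomes user_name."""
    flat = {}
    for key, value in record.items():
        new_key = f"{parent_key}{sep}{key}" if parent_key else key
        if isinstance(value, dict):
            flat.update(flatten(value, new_key, sep))
        else:
            flat[new_key] = value
    return flat


# Hypothetical schema: field name -> type every log entry should use for it.
FIELD_TYPES = {"user_age": int, "user_name": str}


def normalize(record):
    """Flatten a record and coerce known fields to their declared type."""
    flat = flatten(record)
    for field, expected in FIELD_TYPES.items():
        if field in flat and not isinstance(flat[field], expected):
            flat[field] = expected(flat[field])
    return flat


def utc_timestamp(moment):
    """Render a timezone-aware datetime as an ISO 8601 string in UTC."""
    return moment.astimezone(timezone.utc).isoformat()


a = normalize({"user": {"name": "John", "age": "29"}})
b = normalize({"user": {"name": "Rob", "age": 54}})
print(a, b)
print(utc_timestamp(datetime(2024, 10, 1, 12, 0, tzinfo=timezone.utc)))
```

With this in place, both entries store `user_age` as a number, so a single numeric filter matches them. A value that cannot be coerced (for example, `"unknown"`) raises a `ValueError` here; whether to reject, default, or log such entries is a design decision left open in this sketch.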

You can index the fields for a quick search. But remember, think before going on a field-creating spree. Too many fields will only increase the noise during navigation. Also, simplify your fields to make them easily readable.

For example, instead of using

{
  "Error404": "Page Not Found"
}

you can write

{
  "Event": "Page not found",
  "Error code": 404
}

While creating the fields, don’t think about the present only. Understand your application’s architecture. Think about the logs you need to store after a few months when your code evolves. Start logging the information right now. As a result, when your code size increases and you need the data, you’ll have it readily available.

When you add new code, include enough detail in the logs to capture errors and the behavior around them. This will be helpful during debugging or analyzing the error. For example, suppose your project is in the support phase. The customer reports a sudden error. The first thing you do is analyze the logs. If the logs have enough information, your support team can easily find what action led to the error and notify the development team accordingly, leading to quick error fixing.

If you use libraries like log4j, you can capture a program’s name, hostname, and error severity. You can also get the file or class name where the error occurred and current request information. Apart from that, add extra information like user ID, request ID, session ID, etc. This will be beneficial for tracing the transaction. For example, if you’re monitoring logs and notice a security issue or violation, you can easily trace the transaction to its source.
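The paragraph above mentions log4j, which is a Java library. As a sketch of the same idea in Python's standard `logging` module, the example below attaches tracing fields to each entry through the `extra` argument. The field names in `CONTEXT_FIELDS`, the `ContextJsonFormatter` class, and the `checkout` logger name are assumptions chosen for illustration.

```python
import io
import json
import logging

# Hypothetical context fields copied onto each log entry when present.
CONTEXT_FIELDS = ("user_id", "request_id", "session_id")


class ContextJsonFormatter(logging.Formatter):
    """Format records as JSON, including tracing fields passed via `extra`."""

    def format(self, record):
        entry = {
            "time": self.formatTime(record),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        for field in CONTEXT_FIELDS:
            if hasattr(record, field):
                entry[field] = getattr(record, field)
        return json.dumps(entry)


stream = io.StringIO()  # in-memory stream so the output can be inspected
handler = logging.StreamHandler(stream)
handler.setFormatter(ContextJsonFormatter())

logger = logging.getLogger("checkout")
logger.setLevel(logging.INFO)
logger.addHandler(handler)
logger.propagate = False  # keep the entry from also reaching the root logger

logger.error("Payment failed", extra={"user_id": 42, "request_id": "req-123"})
logged = json.loads(stream.getvalue())
print(logged)
```

Because the request ID travels with every entry, searching for `req-123` would return all log lines produced by that one transaction.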

When logging sensitive information, it is crucial to implement strategies to protect this data and comply with privacy regulations. Here are the best practices for handling sensitive information in JSON logs:

Mask sensitive values so the real data never reaches the log:

{
  "user": {
    "username": "johndoe",
    "password": "******"
  }
}
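Masking like this can be applied automatically just before an entry is serialized, rather than relying on every call site to remember it. The Python sketch below shows one way to do that, along with the alternative of dropping sensitive keys entirely. The `SENSITIVE_KEYS` set and the `mask` and `omit` helpers are hypothetical; a real deployment would need its own list of sensitive fields.

```python
import json

# Hypothetical set of key names treated as sensitive.
SENSITIVE_KEYS = {"password", "token", "ssn"}


def mask(record, placeholder="******"):
    """Return a copy of `record` with sensitive values replaced by `placeholder`."""
    masked = {}
    for key, value in record.items():
        if key.lower() in SENSITIVE_KEYS:
            masked[key] = placeholder
        elif isinstance(value, dict):
            masked[key] = mask(value, placeholder)
        else:
            masked[key] = value
    return masked


def omit(record):
    """Return a copy of `record` with sensitive keys dropped entirely."""
    cleaned = {}
    for key, value in record.items():
        if key.lower() in SENSITIVE_KEYS:
            continue
        cleaned[key] = omit(value) if isinstance(value, dict) else value
    return cleaned


event = {"user": {"username": "johndoe", "password": "hunter2"}}
print(json.dumps(mask(event)))
print(json.dumps(omit(event)))
```

Both helpers return copies and leave the original object untouched, so the application can keep using the real values while only the sanitized version is logged.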

Omit sensitive fields entirely when the log does not need them (here, the password field is left out):

{
  "user": {
    "username": "johndoe"
  }
}

You can use a tool to monitor your JSON logs, making searching and filtering much easier. Loggly offers the following features:

With JSON, you get a standard format to structure data. You can use any programming language for parsing it. Unicode encoding makes JSON accessible universally. Considering the benefits we’ve discussed, JSON logging has much to offer your company. So, follow the best JSON logging practices. Start using Loggly. Try it free for 14 days and monitor applications better.

If you are looking for a holistic observability solution, SolarWinds also offers SolarWinds® Observability; SolarWinds Observability goes beyond log management to offer comprehensive visibility of performance data from cloud-native, on-premise, and hybrid applications, infrastructure, users, and networking devices to accelerate troubleshooting. If you are curious, sign up for a free trial today.

This post was written by Arnab Roy Chowdhury. Arnab is a UI developer by profession and a blogging enthusiast. He has strong expertise in the latest UI/UX trends, project methodologies, testing, and scripting.
