My Guide to Selling Appropriate Instrumentation to Developers

In my last post, I shared some guidelines and best practices that we use at Loggly to do a better job at instrumentation. If you’re responsible for keeping a net-centric application operational, you probably know that even the best code can fail when it meets the real world. In order to keep ahead of potential problems, you need to to be able to understand why something is happening when your code fails. You need instrumentation in your application, and you need a solution like Loggly to surface insights from your instrumentation.

So how do you convince your development team to put appropriate instrumentation into practice? I recently wrote an article on this topic for Developer Tech. Here are the key nuggets.

1. Believing in Appropriate Instrumentation Requires a Mindset Change

The complexity and release cadence of today’s net-centric applications are breaking down traditional boundaries between development and operations. In the past engineers were often shielded from the details of the production environment in which their code lived, either through choice or process. As a result, they viewed the code in production as yesterday’s news. If it’s a little difficult to manage, then that is “not my problem”, or “next release”. Today’s engineers need think differently:

Put as much effort into making sure that the system is easy to manage in production as you do into making sure that it is nicely designed and passes all the unit tests

In the initial design, development and QA phase, the most important question is “is my code doing what the design says it should be doing?” Once it hits production, an equally important question must be asked: “Is the system handling the real world as well as we thought it would?” And you need to be ready to answer that question very very quickly.

Since this is a very open-ended question, one of the best ways to answer it is to use instrumentation to give you visibility into the system. In other words, if you don’t know what questions you’re going to need to answer, make sure you at least have the answers to the questions you do know.

… Typically Learned The Hard Way

Unfortunately, the most zealous converts to the new mindset are usually those developers who have paid the price, losing hours of sleep in wild goose chases after something has gone awry at 2 a.m. Once you see your code fail in ways you never would have anticipated, have a pet belief destroyed, or find that you simply have no idea why something is misbehaving, you’re much more likely to question your other assumptions about how things should behave. And you (hopefully) become a believer in the idea that logging what you DO know is a really good way to discover what you don’t.

In the article, I share an example of how we at Loggly had some of our beliefs shaken when working with Amazon S3.

2. Use Instrumentation to Form the New Wall Between Development and Ops

There are probably as many definitions of DevOps as there are developers and ops people, but as far as I’m concerned, here’s the bottom line:

Your developers should want to know how their code is behaving in production – they should “know the shapes.” Your Ops people should want to know about the internal monitoring, and should be comfortable using it to dig a little deeper than they otherwise could. There should be as few barriers as possible between the two groups. They have different specializations, but the end goal for both should be a smoothly running, high performance, well understood system.

I’ve spent a long time building distributed systems, and I’ve never felt comfortable just handing them over to Ops without first building a suite of internal monitoring tools. Those tools—and the instrumentation that feeds them data—are the key to closing the barrier between development and operations team and forming a new, more flexible wall.

3. Help Everyone Understand What Machine-based Log Management Can Do

Developers may resist instrumentation because they are worried about becoming log watchers. You need to make sure that they are instead thinking about creating logs that are watchable by a machine:

-

Problems become visible before they affect every user

-

The logs contain all of the data necessary to identify causes of failure

-

The logs contain performance data for easy analytics

Logs as prose have a long tradition in software engineering. On the other hand, machine-readable logs may look “cluttered” to developers in their raw form. So here’s how I suggest changing their minds: show them a graph of your application’s latency, generated with a couple of mouse clicks rather than a grep | sed | sort | awk| gnuplot pipeline that always goes wrong the first 3 times. I promise that they will quickly see the value. Add some alerts, and they’ll realize they don’t have to sit and tail files to see what is going on in the system.

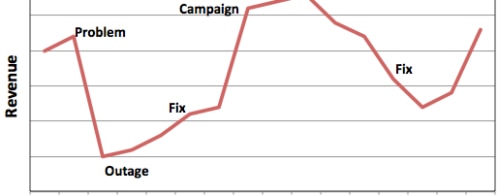

With a better understanding of how log management solution like Loggly can clean up the picture, they will be more eager to adopt my best practices. Eventually, they’ll wonder how they ever survived without it. And then I bet you’ll see some positive feedback loops forming.

4. Use Trending to Look Forward, Not Just Back

The value of log data is not just in understanding what has already happened, but in guiding future action. By using log metrics to guide planning, you’ll embed best-practice instrumentation more deeply into your organizational culture.

At Loggly, we use a wide range of metrics for every part of our system—data volumes, flow through each component, indexing rate, search latency, and more—to guide our growth planning. It’s reassuring to make decisions based on real data, not idealized tests that don’t always reflect the complexity of our production service.

My Final Words for Today

The real world is full of the unexpected. If you want to survive and thrive as a net-centric business, you need instrumentation that gives you a deep understanding of what’s going on in your system, not just what you think should be going on. I hope that this post (and my article) can convince your team without the painful experience of a 2 a.m. disaster. So share it!!

The Loggly and SolarWinds trademarks, service marks, and logos are the exclusive property of SolarWinds Worldwide, LLC or its affiliates. All other trademarks are the property of their respective owners.

Jon Gifford