Kubernetes 1.7 auditing with Loggly

As organizations start building production-grade Kubernetes clusters, security has emerged as one of the big issues to address. This is true whether you are a startup that is getting serious about change control or an enterprise that already follows best practices but is unsure how to replicate them in a Kubernetes environment. Once you have enough services and kubectl users, you will want visibility into the actions users perform in your cluster. In this article, we’ll show you how to:

- Get started with Kubernetes audit logs

- Create a user

- Centralize Kubernetes logs for visibility and monitoring

- Create alerts

- Limit log noise

Getting started

Prerequisites:

- OpenSSL

- Minikube

- Kubectl

To begin, you must be on a Kubernetes 1.7 cluster. For simplicity, we will use a Minikube cluster on our own machine:

minikube start --kubernetes-version=v1.7.5 --extra-config=apiserver.Authorization.Mode=RBAC --extra-config=apiserver.Audit.LogOptions.Path=/var/log/kube-apiserver-audit.log

This will allow us to see the audit logs on the host machine and view them like so:

minikube ssh "sudo tail -f -n 10 /var/log/kube-apiserver-audit.log"

It will also allow the Fluentd image to consume the logs at this location and store them inside of Loggly.
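To get a feel for what ends up in that file before it reaches Loggly, it can help to parse a line by hand. The sketch below assumes the legacy `key="value"` line layout that a 1.7 API server writes when Advanced Auditing is off. The sample line and the `parse_audit_line` helper are illustrative and not taken from a real cluster.

```python
import re

# Matches key="value" pairs; the value may contain backslash-escaped quotes.
PAIR = re.compile(r'(\w+)="((?:[^"\\]|\\.)*)"')


def parse_audit_line(line):
    """Split one legacy-style audit line into a dict of fields.

    Returns None when the line does not contain the " AUDIT: " marker.
    """
    head, sep, rest = line.partition(" AUDIT: ")
    if not sep:
        return None
    fields = {key: value for key, value in PAIR.findall(rest)}
    fields["timestamp"] = head
    return fields


# Hypothetical sample line, for illustration only.
sample = (
    '2017-09-20T10:15:00.000000000Z AUDIT: id="abc-123" ip="192.168.99.1" '
    'method="GET" user="unauthorized" namespace="default" '
    'uri="/api/v1/namespaces/default/secrets"'
)

event = parse_audit_line(sample)
print(event["user"], event["method"], event["uri"])
```

If your cluster emits a different layout (for example JSON events), the regular expression will not apply and a JSON parser is the better starting point.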

Centralizing Kubernetes logs for visibility and monitoring

In this example, we’ll use Loggly to centralize our Kubernetes audit logs. If you are already sending your Kubernetes API logs to Loggly, you will automatically capture the audit logs once you enable them. Otherwise, you have the following two options:

- Send all API logs.

- This blog shows how this works for API logs, and the project itself has been expanded to now support the new audit logs. All you need to do is check out the project here and change the token provided by your Loggly account on this line.

- Due to role-based access control (RBAC) you will also have to give the Fluentd container enough privileges to consume the Kubernetes API logs. To do this, deploy this file to your Kubernetes cluster.

- Send only audit logs via the audit backend webhooks.

- This is currently an alpha feature and does not quite work as intended, but hopefully in the future we’ll be able to set a webhook that posts batched logs to an HTTP endpoint, using the Advanced Auditing feature. This will allow us to send only the audit logs to Loggly rather than all API logs.

- Get your unique HTTPS URL from Loggly here:

https://{accountname}.loggly.com/sources/setup/https

- Set up a kubecfg-like file to connect to Loggly:

https://gist.github.com/feelobot/10ee9cb094500c1d4ab32e5f56fe0bb5

- Enable the feature and configure how to deliver the log messages:

minikube start --kubernetes-version=1.7.5 --extra-config=apiserver.Audit.WebhookOptions.ConfigFile=/etc/kubernetes/webhook.yaml --extra-config=apiserver.Audit.WebhookOptions.Mode=batch --feature-gates=AdvancedAuditing=true

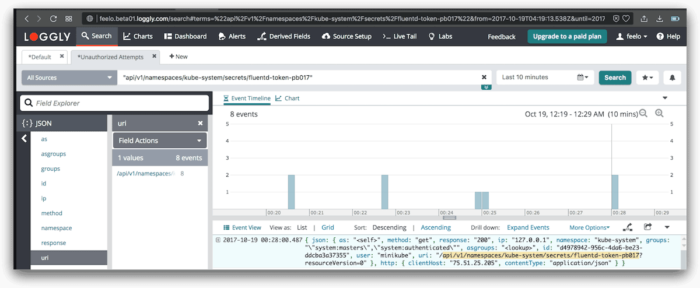

Creating an unauthorized user

We will now create a limited user with no permissions. Because this user is not allowed to describe or reveal Kubernetes secrets, we can alert on its unauthorized attempts to access them.

- Create keys and certs for a new user.

openssl genrsa -out ~/.kube/unauthorized.key 2048

openssl req -new -key ~/.kube/unauthorized.key -out ~/.kube/unauthorized.csr -subj "/CN=unauthorized/O=minikube"

openssl x509 -req -in ~/.kube/unauthorized.csr -CA ~/.minikube/ca.crt -CAkey ~/.minikube/ca.key -CAcreateserial -out ~/.kube/unauthorized.crt -days 500

- Create a context using this new user.

cd ~/ && kubectl config set-credentials unauthorized --client-certificate=.kube/unauthorized.crt --client-key=.kube/unauthorized.key

kubectl config set-context unauthorized-context --cluster=minikube --namespace=default --user=unauthorized

- Try to access secrets.

kubectl --context=unauthorized-context get secrets

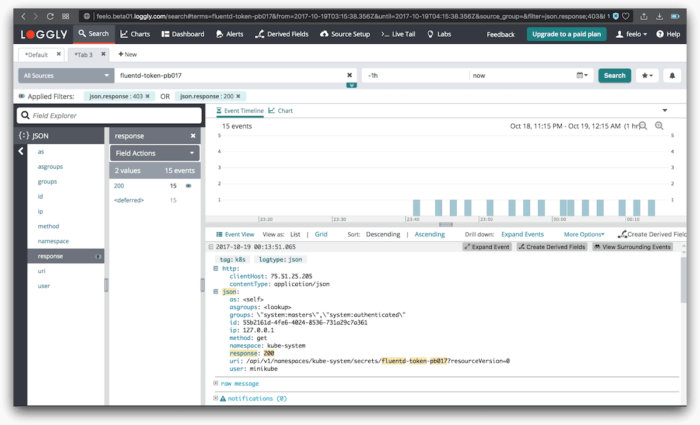
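A denied request like this one is the kind of event worth alerting on. As a rough sketch, assuming each audit event has already been parsed into a dictionary shaped like the Advanced Auditing `Event` object (`user.username`, `objectRef.resource`, `responseStatus.code`), a filter for forbidden secret access might look like the following. The helper name and the sample events are made up for illustration.

```python
def is_denied_secret_access(event):
    """Return True when a request for secrets was rejected with HTTP 403."""
    # "or {}" guards against fields that are present but null in the JSON.
    resource = (event.get("objectRef") or {}).get("resource")
    code = (event.get("responseStatus") or {}).get("code")
    return resource == "secrets" and code == 403


# Hypothetical, already-parsed events.
events = [
    {
        "user": {"username": "unauthorized"},
        "verb": "list",
        "objectRef": {"resource": "secrets", "namespace": "default"},
        "responseStatus": {"code": 403},
    },
    {
        "user": {"username": "minikube"},
        "verb": "list",
        "objectRef": {"resource": "pods", "namespace": "default"},
        "responseStatus": {"code": 200},
    },
]

offenders = [e["user"]["username"] for e in events if is_denied_secret_access(e)]
print(offenders)  # ['unauthorized']
```

In Loggly itself, the equivalent would be a saved search on the same fields with an alert attached, rather than code run against the log file.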

Setting up useful alerts

You may want to create alerts on:

- User edits a secret:

- User execs into a container

- Unfortunately, this cannot be tracked through audit logs yet. We only get an idea that a user is performing tasks on pods, without enough detail to see which pods or what actions are involved.

- Alerting on Jenkins vs. non-Jenkins activity

- This depends on how you are running Jenkins (i.e., in the cluster or outside the cluster). If you are running it outside the cluster, then you can create a user just like we did above, but with enough access to do everything Jenkins needs to do. See the Kubernetes docs for more information about setting specific RBAC roles.

- If you are running Jenkins as a deployment within Kubernetes, you can attribute a specific Service Account to your deployment so all actions will show up with Jenkins in the groups field in the JSON logs.

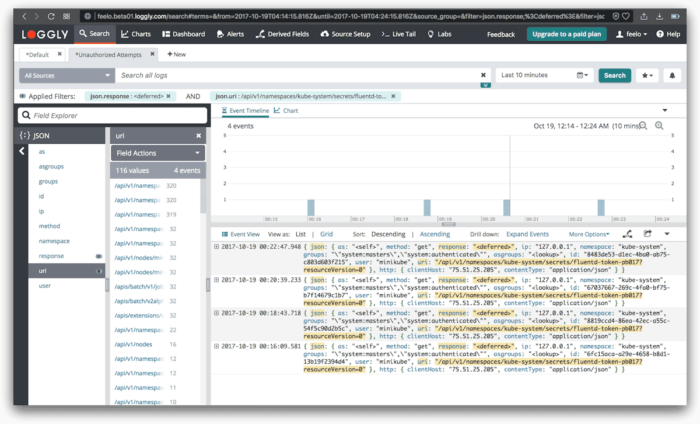
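One way to separate Jenkins from non-Jenkins activity is to check the identity recorded on each event. Kubernetes names service accounts `system:serviceaccount:<namespace>:<name>`, so the sketch below assumes a hypothetical `jenkins` service account in the `default` namespace and events that have already been parsed into dictionaries with a `user.username` field. Both the account name and the event shape are assumptions.

```python
# Hypothetical service account; adjust namespace and name to your deployment.
JENKINS_USER = "system:serviceaccount:default:jenkins"


def is_jenkins(event, jenkins_user=JENKINS_USER):
    """Return True when the event was issued by the Jenkins service account."""
    return (event.get("user") or {}).get("username") == jenkins_user


def split_by_origin(events):
    """Partition events into (jenkins_events, other_events)."""
    jenkins, others = [], []
    for event in events:
        (jenkins if is_jenkins(event) else others).append(event)
    return jenkins, others


# Hypothetical sample events.
sample_events = [
    {"user": {"username": JENKINS_USER}, "verb": "create"},
    {"user": {"username": "unauthorized"}, "verb": "get"},
]

from_jenkins, from_others = split_by_origin(sample_events)
print(len(from_jenkins), len(from_others))  # 1 1
```

Anything that lands in the second list is a candidate for a "changes made outside of Jenkins" alert.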

Limiting log noise

If cost is a concern or there are just too many logs coming in that are of zero interest, you can filter them from ever being sent. Google has a pretty detailed Audit Policy that restricts a lot of things it doesn’t want to see in its logging backend. Google’s full policy can be found here. A non-templated, rendered version can be found here.
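Audit policy rules are evaluated in order, and the first rule that matches a request decides its audit level; a level of `None` means the event is not logged. The sketch below models that first-match behavior in a heavily simplified form. The flat `users`, `verbs`, and `resources` lists and the sample rules are assumptions for illustration. They do not mirror the full policy schema or Google’s actual policy.

```python
def matches(rule, request):
    """A rule matches when every criterion it lists includes the request's value."""
    for key, field in (("users", "user"), ("verbs", "verb"), ("resources", "resource")):
        allowed = rule.get(key)
        # A missing or empty criterion matches everything.
        if allowed and request[field] not in allowed:
            return False
    return True


def audit_level(rules, request, default="None"):
    """Return the level of the first matching rule, or the default if none match."""
    for rule in rules:
        if matches(rule, request):
            return rule["level"]
    return default


# Illustrative rules: drop noisy kube-proxy watches, keep only metadata for
# secrets, and log the request body for everything else.
rules = [
    {"level": "None", "users": ["system:kube-proxy"], "verbs": ["watch"]},
    {"level": "Metadata", "resources": ["secrets"]},
    {"level": "Request"},
]

noisy = {"user": "system:kube-proxy", "verb": "watch", "resource": "endpoints"}
secret_read = {"user": "alice", "verb": "get", "resource": "secrets"}
pod_create = {"user": "alice", "verb": "create", "resource": "pods"}

print(audit_level(rules, noisy))        # None
print(audit_level(rules, secret_read))  # Metadata
print(audit_level(rules, pod_create))   # Request
```

Because order matters, the most specific "drop this" rules usually go first and a catch-all rule goes last.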

How to deploy

Deploying your audit policy is pretty straightforward, but because this is an alpha feature you will need to enable the feature on startup, as well as provide the path to your policy file.

- Minikube:

minikube start --kubernetes-version=v1.7.5 --extra-config=apiserver.Authorization.Mode=RBAC --extra-config=apiserver.Audit.LogOptions.Path=/var/log/kube-apiserver-audit.log --extra-config=apiserver.Audit.PolicyFile=/etc/kubernetes/audit-policy.yaml --feature-gates=AdvancedAuditing=true

- Make sure to ssh into the host machine and add your policy file at the path you provided, using:

minikube ssh

- Kubernetes:

- Enable the following flags on the API server:

--feature-gates=AdvancedAuditing=true
--audit-policy-file=/etc/kubernetes/audit-policy.yaml

Results

A new cluster that I launched went from ~300 events per minute to ~200 events per minute (roughly a one-third reduction) using the GCE policy, while still capturing all the details I needed to monitor the cluster as described above.

Summary

While auditing is still a very new feature for Kubernetes, we can already take advantage of it by using a central logging service such as Loggly to spot anomalies or suspicious activity in a Kubernetes cluster. It’s easy to launch a Kubernetes pod in a local environment to begin collecting and shipping logs to such a service. We demonstrated a bit of role-based access control to illustrate the different types of requests we should see in our event logs. We also saw how alerting can address emerging security needs in Kubernetes and how filtering limits log noise. Hopefully this post gives you some of the missing tools for tackling any auditing requirements you have now or in the future.


Felix Rodriguez