Loggly Q&A: Lloyd Taylor talks DevOps best practices

Lloyd Taylor, former VP of Operations for LinkedIn, is an investor and advisor to select startups including Loggly and Samasource. Taylor has held many senior IT operations roles, among them Director of Global Operations for Google, where he developed, implemented, and operated an infinitely scalable physical infrastructure for Google’s server farms. Lloyd sat down with Loggly to talk about DevOps best practices.

1. You’ve been working in Internet Operations for over three decades. What do you think are key practices for DevOps, IT Ops, and IT management?

The most important thing is to deeply understand how Operations directly supports the overall mission and goals of the organization. It’s easy to fall into an ‘us vs. them’ mentality since IT ends up with responsibility for operational excellence, regardless of the quality of the product design or engineering work that goes into the product or service.

Consistently behaving in a way that demonstrably supports the success of the organization is critical for the IT/DevOps teams to be supported and respected by the business. Only by building trust and credibility can the teams earn the right to influence the product/engineering lifecycle.

2. What is the secret sauce that top social/Web properties possess for DevOps? And for Development?

Firstly, intense collaboration. Product, Engineering, and Operations all have valuable insights into how a new product or service needs to be designed, built, and operated to be successful. The traditional waterfall approach – where Product designs something and hands it off to Engineering to be built, which in turn hands it off to Operations for deployment – leads to systems that are brittle and scale poorly.

Secondly, both Engineering and Operations/Infrastructure must have a seat at the Product Council table. Getting both insights and buy-in from people who are experts in product design, engineering implementation, and infrastructure deployment helps ensure that the system will be resilient and scalable.

3. How important is log file analysis in the equation?

Simple and complete access to development and production log files is critical for maintaining system uptime and availability. While some limitations are important (for example, limiting access to security event log files to protect separation of duties), allowing the broadest possible access to all members of the product/engineering/operations teams greatly improves MTTR when there is a problem and offers opportunities to find bugs and optimize systems during normal operations. This transparency also helps build trust and makes it easier for cross-functional groups to collaborate.

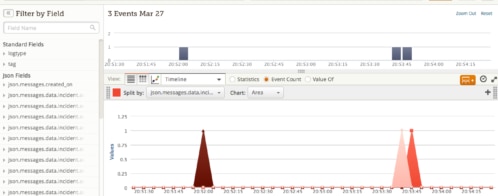

Centralizing logs, and applying machine-learning algorithms to help correlate events and incidents, makes it much easier to quickly find issues and problems. The ability to run complex reporting analyses to uncover subtle problems and potentially damaging trends greatly increases the value of the log files.
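To make the idea of centralizing and correlating concrete, here is a minimal Python sketch. It is not machine learning and not how any particular log service works: it simply merges lines from several services into one timeline and groups errors that occur close together in time. The service names, the log line format, the sample data, and the 10-second window are all assumptions chosen for illustration.

```python
from datetime import datetime, timedelta

# Hypothetical log lines from three services; the
# "<ISO timestamp> <LEVEL> <message>" format is an assumption.
RAW_LOGS = {
    "web": [
        "2024-01-01T10:00:01 ERROR upstream timeout",
        "2024-01-01T10:05:00 INFO request served",
    ],
    "api": [
        "2024-01-01T10:00:03 ERROR database connection refused",
    ],
    "db": [
        "2024-01-01T10:00:00 ERROR too many connections",
        "2024-01-01T10:20:00 ERROR slow query",
    ],
}


def centralize(raw_logs):
    """Merge per-service lines into one list of events sorted by time."""
    events = []
    for service, lines in raw_logs.items():
        for line in lines:
            stamp, level, message = line.split(" ", 2)
            events.append((datetime.fromisoformat(stamp), service, level, message))
    return sorted(events)


def correlate_errors(events, window=timedelta(seconds=10)):
    """Group errors; each error joins a group if it is within `window`
    of the previous error in that group."""
    groups = []
    for event in events:
        if event[2] != "ERROR":
            continue
        if groups and event[0] - groups[-1][-1][0] <= window:
            groups[-1].append(event)
        else:
            groups.append([event])
    return groups


incidents = correlate_errors(centralize(RAW_LOGS))
for incident in incidents:
    # Show when each candidate incident started and which services it touched.
    services = sorted({service for _, service, _, _ in incident})
    print(incident[0][0].isoformat(), services)
```

Even this simple time-window grouping shows why a single merged timeline helps: errors that look unrelated in three separate files line up as one candidate incident once they sit side by side.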

4. What has the advent of cloud-based log management services done for IT departments?

Setting up log management and analysis is a complex problem. While it seems straightforward to implement an open-source ELK (Elasticsearch/Logstash/Kibana) instance, the ongoing management and tuning are a significant burden. In addition, extracting the most value from the log information requires extensive coding and machine learning work.

Using a cloud-based log management service lets a team quickly implement an advanced system and benefit from what the provider has learned from the common issues experienced by thousands of other users. The provider’s tools and analysis systems can save a great deal of time and quickly provide valuable insight into how your system is operating.


Lloyd Taylor