Logging in New-Style Daemons with systemd

With new-style daemons, systemd has introduced a new way of writing daemons that vastly simplifies the process for programmers compared to the old approach. One part of this new approach is the way new-style daemons can handle logging. Instead of worrying about the many complexities involved in logging, such daemons are encouraged to simply use standard output for log messages. systemd handles the rest.

Correct Logging Is Complicated

In the past, programmers implementing logging for their applications were faced with a large number of questions and problems to solve.

If logging is meant to go to a file, the application needs configuration options as to where these files should go, and it must make sure to create any underlying directories with the correct permissions. Such log files need to be rotated and compressed regularly to avoid huge files and wasted space. The application can do this itself, or use external tools like logrotate. When the latter is used, the application needs to provide a way of handling log files being changed while they are open and written to.

Just using syslog is a lot simpler but still poses questions. By default, some logging libraries simply use the UDP port, but that might not be available. Instead, sometimes it is necessary to use /dev/log instead. In either case, the application has to set up a connection and format all log messages according to the syslog protocol.

With modern centralized logging solutions like Loggly, application developers might even get asked to provide ways of sending logs directly there.

Whichever destination is chosen, though, there is a time between program start and when logging is configured correctly where the daemon cannot actually notify the admin of problems and it is impossible to emit log messages. How would the program notify the admin that it is unable to read the config file that says where to log to, or that it cannot write to the log file, or that there was an error connecting to syslog?

While libraries exist to make most of this a little less painful, this still adds to the complexity of system daemons, their configuration, and their failure modes.

Treat Each Output Line as an Event

There is no need for any of this complexity, though. Modern application designs like The Twelve-Factor App recommend to simply emit log messages as event streams to standard output. Applications do not need to concern themselves with log files, file locations, rotation, syslog ports, or socket locations, and as standard output is available from the very moment the program starts, log messages will never be lost.

The protocol for this log emission is as simple as it could be: Every line is one log message. The process manager is responsible for routing these logs where they should go. systemd, for example, captures output and forwards it to journald and syslog where it can be processed further.

There are two possible gotchas with this approach that applications have to be aware of, though.

First, one event per line means that multi-line messages are not treated as one event. This is not unusual, but especially for backtraces this is important to keep in mind. Newlines in such events still have to be escaped somehow.

Second, this does not allow the application to specify log levels per event emitted. systemd has a small extension to allow this, though. Much like in the syslog protocol, applications can prefix each line with angle brackets and a number to specify the log level:

<7>This is a DEBUG level message <6>This is an INFO level message <5>This is a NOTICE level message <4>This is a WARNING level message <3>This is an ERR level message <2>This is a CRIT level message <1>This is an ALERT level message <0>This is an EMERG level message

If the prefix is missing, systemd just uses a configurable default (INFO, usually), so even this minimal complexity is only necessary if the application needs it.

The Remaining Configuration Is Done in systemd

Simply writing to standard output is all the application has to do. Everything else can be configured in the service file of systemd, as documented in systemd.exec(5). Luckily, all of the options have sensible defaults, so usually there is no need to configure anything at all to make use of this feature. And by keeping both the code to do the log handling, as well as the configuration in systemd, all applications can benefit from this and be simplified a lot.

A Daemon Is Now Trivial to Write

Thanks to moving all of the recurring logic of writing daemons into systemd, even a shell script can serve as a daemon. Let’s create the following script as /usr/local/bin/test-daemon.sh:

#!/bin/sh i=0 while sleep 1 do echo "<$i>I'm alive!" i=$(((i+1)%8)) done

This is all there is to our daemon. It will emit its cute message, once per second, cycling through all log level prefixes. To hook this up to systemd, we just need a two-line service file. Create /etc/systemd/system/test.service with these lines:

[Service] ExecStart=/usr/local/bin/test-daemon.sh

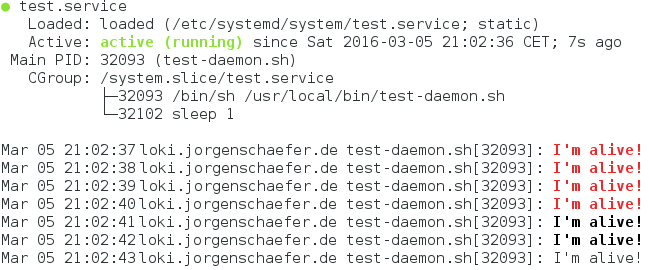

That’s it. systemctl daemon-reload will make systemd find the new service file, and systemctl start test will then start it. You can check that it is running with systemctl status test:

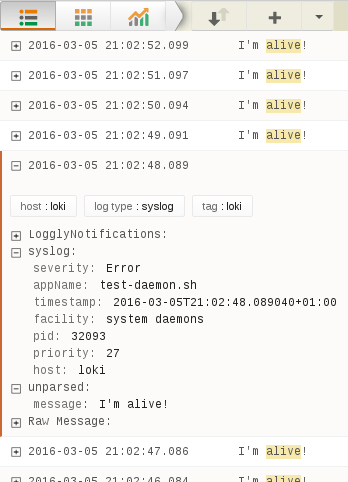

The output goes through systemd to journald, which is why we see it in status and even get colors to mark messages with higher severities. journald also automatically forwards this to syslog, so we can see our daemon in our log monitoring.

In a sense, we have attached the output of our program directly to Loggly.

Our minimal daemon is already fully integrated with the system without using any special libraries, configuration options, or having to worry about cleaning up log files.

With two more lines in the service file, we can enable this daemon to automatically run at system startup. Another line adds monitoring, so the process will be automatically restarted when it fails.

Application Development Simplified

By using this approach, daemons can be extremely simplified, both in regards to code complexity as well as the required configuration options. Thanks to systemd’s configuration options, all features of syslog, like the facility or application name, can still be used when required. And because all log messages go through the same channels, they can be easily filtered and routed.

The Loggly and SolarWinds trademarks, service marks, and logos are the exclusive property of SolarWinds Worldwide, LLC or its affiliates. All other trademarks are the property of their respective owners.

Jorgen Schäfer