New Log Types Supported: Rails, Nginx, AWS S3 and Logstash

Loggly is always making it easier to bring in new types of log data. While Loggly can collect and manage any kind of text-based logs (and all without an agent), we offer a growing list of guides with step-by-step instructions for the most common log types. I’m excited to share a few details on some new supported log types: Rails, Nginx, AWS S3 logging, and Logstash custom parsing.

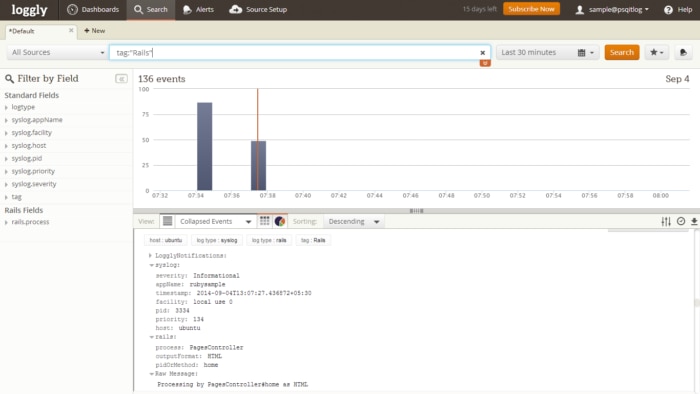

Ruby on Rails

Ruby on Rails is one of the most popular web development frameworks. Loggly parses specific types of Rails logs, enabling point-and-click filtering and charting. Previously, Loggly offered a Ruby Gem that would send logs over HTTP. Now we also have instructions for a Syslogger Gem that lets you send these logs over syslog.

Using the Syslogger Gem offers several advantages:

- Since the Syslogger Gem makes use of your syslog daemon, log shipping runs as a separate process from your web server and won’t slow your web server down. The HTTP-based Ruby Gem can also send logs asynchronously, but it still runs within the same process as your web server.

- You can centralize your Ruby logs on the local side before sending them to Loggly. This allows you to do some filtering if desired. (If you’re not a syslog master, this recent post by my colleague Ivan Tam will give you the key concepts you need to know.)

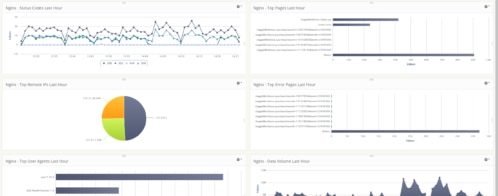

Nginx Script

We have also added a setup script for the Nginx web server. Similar to our Apache and Linux log setup scripts, it lets you start sending Nginx logs to Loggly for analysis with a single command. This saves you from having to change configuration files by hand, and the script can verify that logs are being sent successfully.

AWS S3

AWS S3 has become a very common way of storing files and data in AWS environments. Many AWS applications write logs directly to customers’ S3 buckets. Now, you can easily read data from those buckets and send it to Loggly for easier monitoring and analysis.

We have released a beta version of a script that synchronizes the items in your S3 bucket to your local computer and configures rsyslog to send them to Loggly. The script also sets up a cron job that syncs your logs every five minutes. While the script doesn’t yet support compressed files or new files being added to the bucket, we expect to add more features in the GA release.

Logstash Custom Parsing

Many customers have logs in custom formats. Our Logstash custom parsing guide offers a way to send those logs to Loggly in a format that we can parse and use for filtering, trend graphs, and more.

In our documentation, you’ll now find a guide on how to use Grok to extract fields from custom log formats and send the logs to Loggly as JSON. Grok supports hundreds of different formats, including unique path names, timestamps, IP addresses, and usernames. It can also parse HAProxy, Redis, and PostgreSQL logs, among others.
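To make the idea concrete, here is a minimal sketch of what Grok-style extraction does: it matches each line against a named pattern and emits the captured fields as JSON. This is plain Python rather than Logstash or Grok itself, and the log format, field names, and helper functions (`parse_line`, `to_json_event`) are invented for illustration. Your own format and the patterns in the guide will differ.

```python
import json
import re

# Hypothetical custom log format, invented for this illustration:
#   2014-08-12T10:15:30Z 192.168.1.10 alice GET /var/www/index.html 200
# Each named group plays the role that a named Grok pattern would play.
LINE_PATTERN = re.compile(
    r"(?P<timestamp>\S+) "
    r"(?P<client_ip>\d{1,3}(?:\.\d{1,3}){3}) "
    r"(?P<username>\w+) "
    r"(?P<method>[A-Z]+) "
    r"(?P<path>/\S*) "
    r"(?P<status>\d{3})$"
)


def parse_line(line):
    """Return the extracted fields as a dict, or None if the line does not match."""
    match = LINE_PATTERN.match(line.strip())
    if match is None:
        return None
    fields = match.groupdict()
    # Convert the status code so it is stored as a number, not a string.
    fields["status"] = int(fields["status"])
    return fields


def to_json_event(line):
    """Serialize a line to JSON; lines that do not match are kept under "message"."""
    fields = parse_line(line)
    if fields is None:
        fields = {"message": line.strip()}
    return json.dumps(fields, sort_keys=True)


if __name__ == "__main__":
    sample = "2014-08-12T10:15:30Z 192.168.1.10 alice GET /var/www/index.html 200"
    print(to_json_event(sample))
```

Keeping unmatched lines under a single `message` key is one possible design choice. It means no log data is dropped when a line does not fit the expected format.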

Learn More About All of These Log Types

Our Support Center has a ton of new information and guides. If you haven’t been there in a while, it’s time to pay a visit. If you still haven’t signed up for a free trial of Loggly, now is a great time!


Jason Skowronski