Search 101

In a world where search is everywhere and in a part of the world where everyone seems to be tech-savvy, you would think that everyone would have at least a basic understanding of the principles of search technology. And this is true, but only up to a point.

Every now and then, I give a talk called Search 101 to new people here at Loggly. I go over the basics of search, and then go a level or two deeper so that people can see the strengths and weaknesses of the technology that is so much a part of what we do. The idea is to get everyone in the company familiar with what we can do with search, so that we can do a better job of explaining why our product works the way it does to our customers and also do a better job of surfacing the cool stuff this technology gives us. To illustrate these concepts, I give a few examples that show how search has been either a big win or where it is simply the wrong technology for the problem.

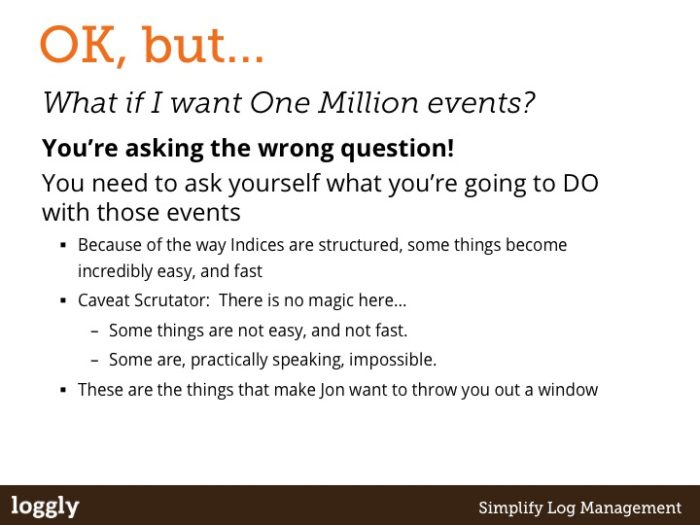

When we started the company, I would threaten to jump out a window if someone proposed a product idea that could only be implemented using one of those weaknesses. This turned into a running gag, and in the first version of this talk, I subtitled it “How do we stop Jon jumping out the window?” As time went by, the threat of me jumping out a window diminished to the point where it was a completely idle threat (some would say an incentive). So I changed the threat: now, I will throw you out the window if you persist in asking these types of question. 😉

Although this is a fairly wordy and decidedly plain deck (I’m an Engineer, not a Marketer 😉 ) I typically talk around the content, to provide more depth for each slide. What follows is a blog version of that…

The Basics

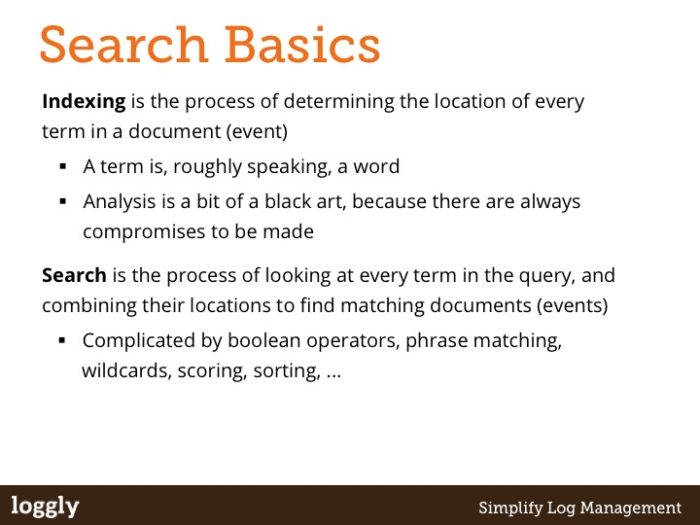

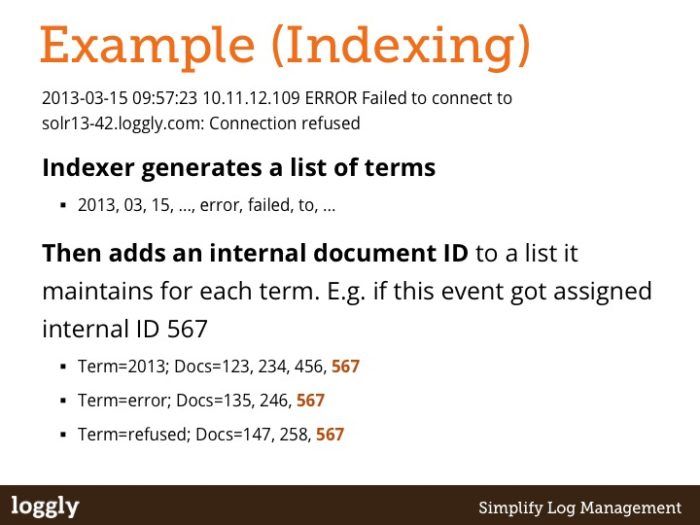

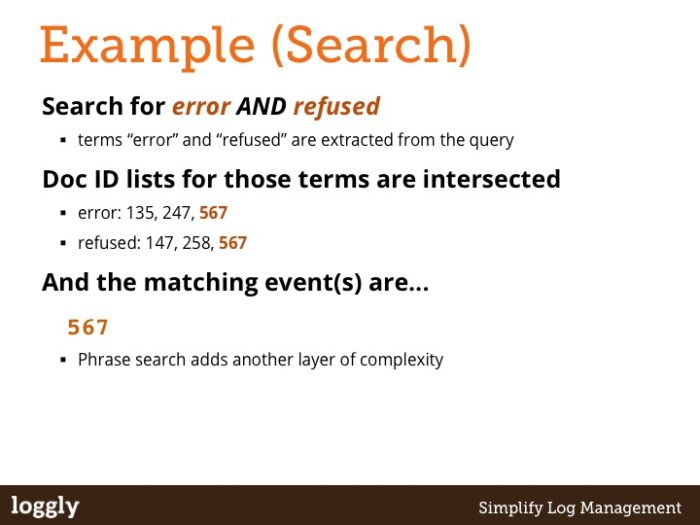

Slides 2-4 are the simplest possible description of indexing and search and hopefully are fairly self-evident.

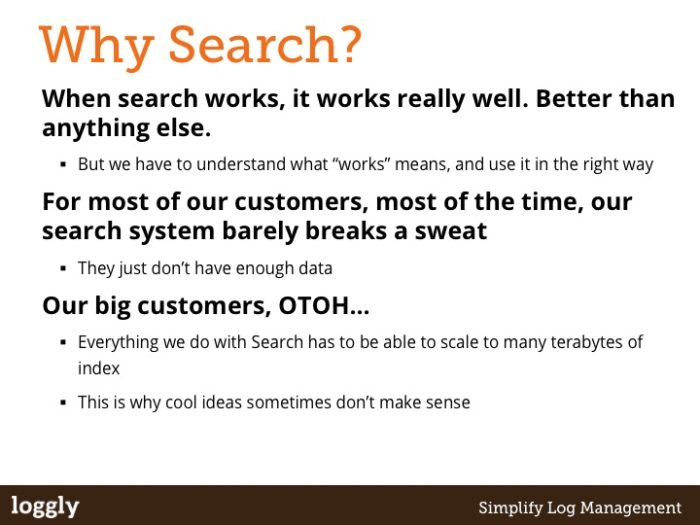

Slide 5 is to answer the obvious questions that people coming from a unix or RDBMS background might have about why search is even useful in the first place. Fundamentally, we get the benefits of aggregated data plus the power of a much more flexible tool and thus can beat both of these alternate approaches for some very important problems.

Slide 6 talks to the most commonly asked for thing that is a real weakness in search: It’s not designed for the same kinds of problems that you might solve with a database or hadoop. If those are the questions you have to answer, then search is probably not going to be your friend. There are plenty of other better tools out there, and you should choose one of them instead. For the questions we want to answer in our product, however, Search does a darn fine job.

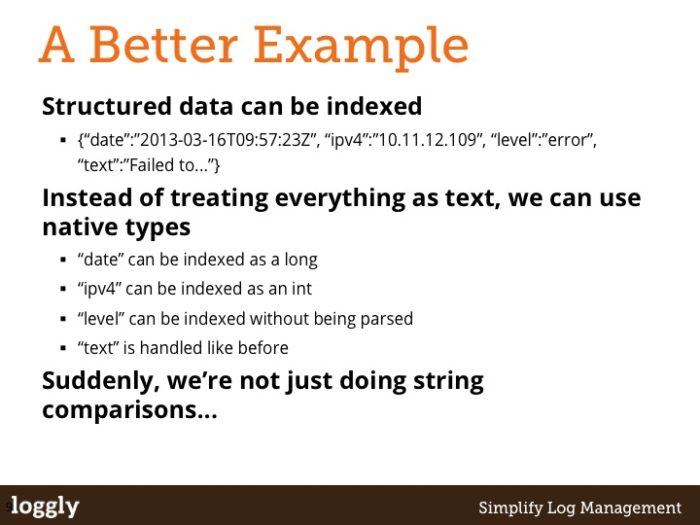

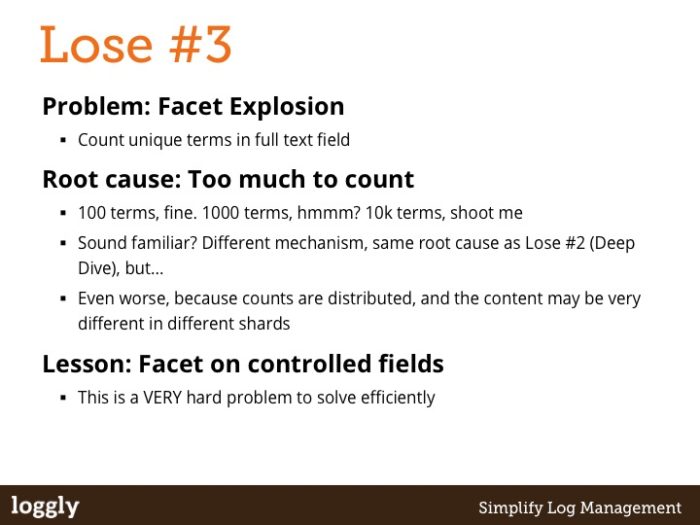

Slide 7 shows that Search is not just about string matching or full-text search. There have been a lot of recent advances in Lucene that make it possible to do some fairly sophisticated numeric manipulation that was simply not possible even three years ago. It also demonstrates that not all content has to be treated equally in a single document. Some values should be indexed without any complex analysis, for example fields that you might want to facet on.

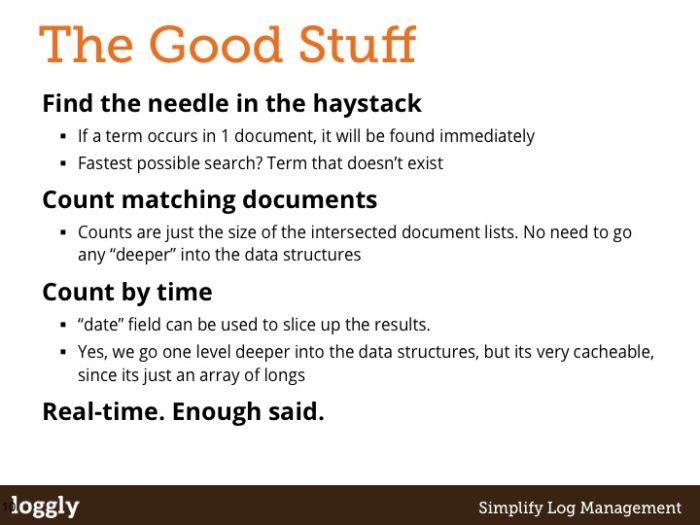

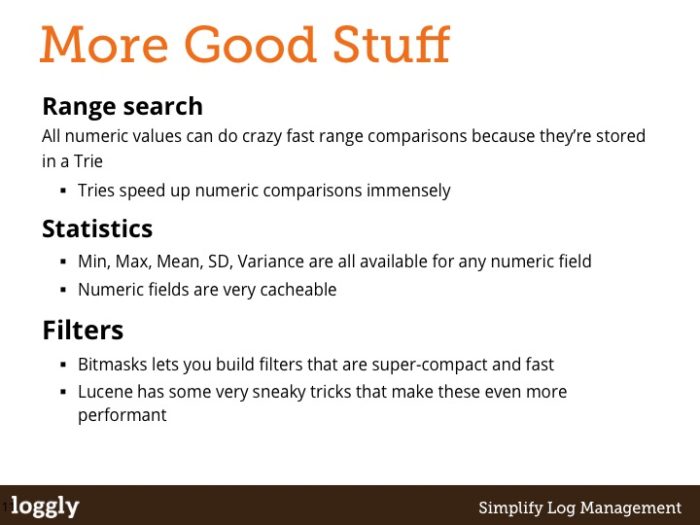

Slides 8 and 9 run through some of the stuff that work really well in search. Numerics, as hinted at above, can be used to generate statistical data that you would previously have needed to use awk or a spreadsheet or even a database to get. Counts are probably the cheapest thing you can do in search, because you can get them directly from the top level of the index without even needing to “dive into” the documents. All of this is good stuff, and stuff that we can use without worry.

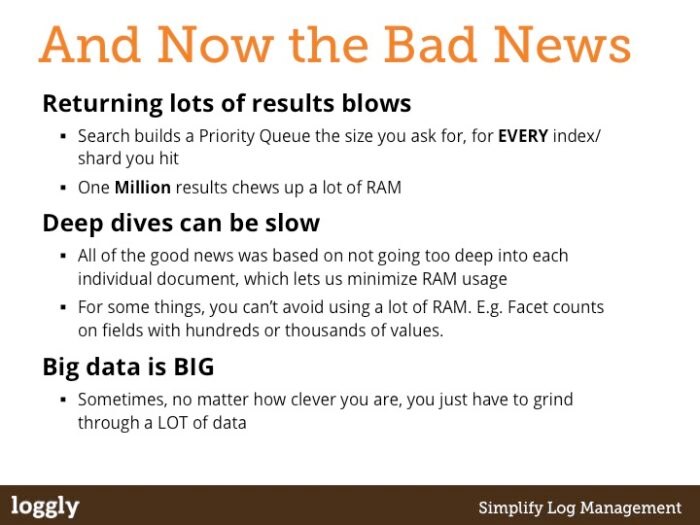

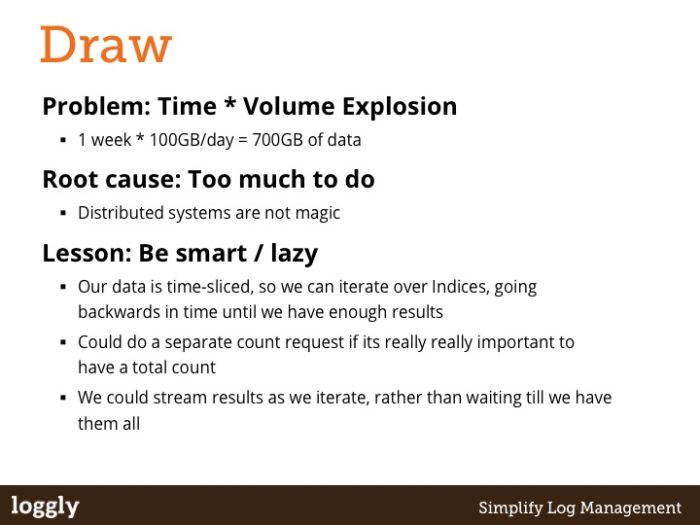

Slides 10 and 11 are the splash of cold water… The bad news boils down to something that is hard to argue with: Mathematics. When you try and do a lot of work, it takes time. There is no pixie dust that can solve that for you. And yet, in the face of that, everyone I’ve ever worked with in search still believes that speed matters and that it’s worth figuring out where those few tens of milliseconds are spent. We’re never satisfied with the performance we have, and when we say “performance will be terrible” our frame of reference is usually an order of magnitude off that of the people we’re talking to. It is our curse.

There is a nursery rhyme from my childhood in New Zealand. The number of people who have heard it in San Francisco is surprisingly low, so its impact is a little lost. But I like it so much I can’t remove it. The point is this: Search is like the little Girl. When its good, it is far far better than anything else, but when its bad, it is truly, deeply, profoundly horrid. It goes like this:

There was a little Girl

Who had a little curl

Right in the middle of her foreheadWhen she was good

She was very very good

But when she was bad

She was horrid

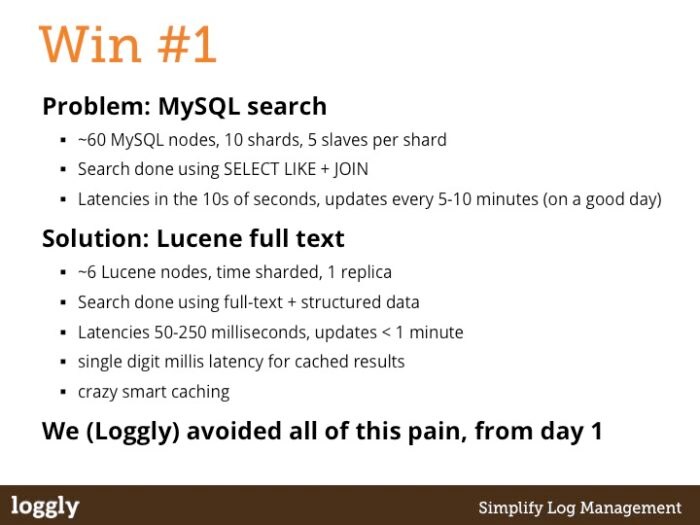

The next few slides are examples of search being that little girl. Each one is a real life example that show just how good or horrid your life can be. Hopefully each is fairly self-explanatory, and assuming the previous slides made sense, each of these should also make sense.

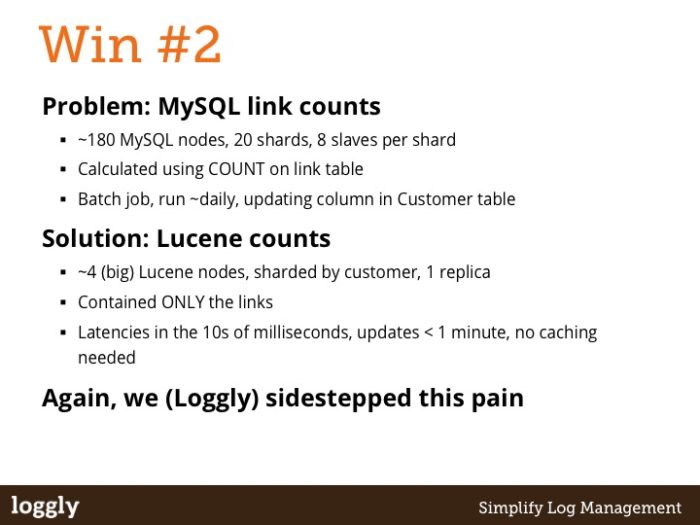

My favorite is the link counting example, partly because it is the biggest bang for the buck, but also because it was incredibly easy to do. When we rolled it into production it felt like we’d just upgraded from a pedal-car to a Bugatti Veyron – the difference was staggering.

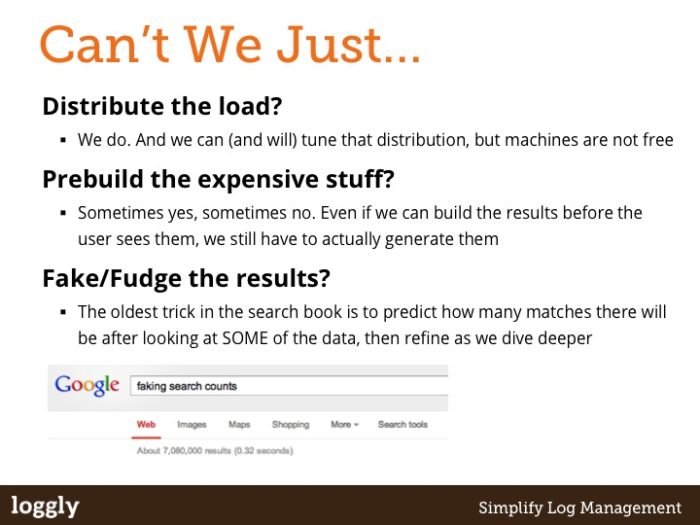

Slide 20 talks about some of the things we can do to get around some of the limitations in the previous slides. These are some of the standard tricks of the trade for search systems, and its always interesting to talk about the last one. Essentially what its saying is “when you can’t (or don’t want to) do all of the work, do enough to be able to lie convincingly”. In our current system, we do do all of the work, so we don’t have to use this trick. But its always fun to point out that even The Google “lies”. Go try it yourself – see how many pages deep you can go into a set of results, and watch the number of results change as you do. This works best when there are only a few thousand results, so play around till you have a query that gives you that many results. Here’s one to get you started: https://lmgtfy.com/?q=%22google+result+counts%22

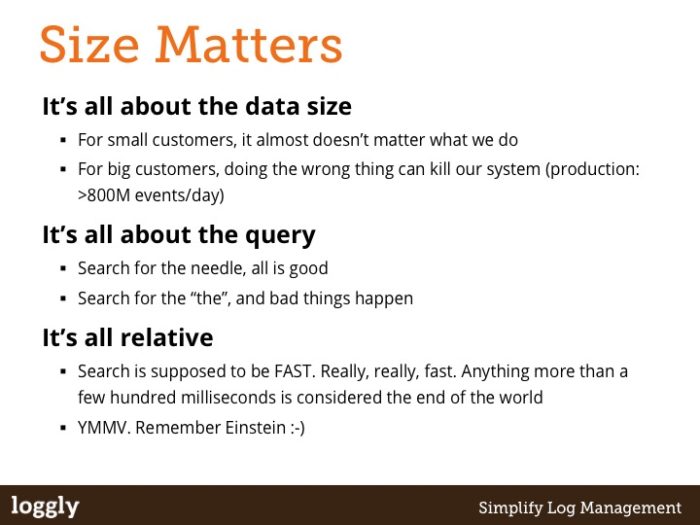

Slide 21 is another recap; I guess I like to repeat myself. Or maybe I just like to say the same thing in different ways. The nugget here though is the last point: it is really easy to build a system that does something cool when you’re dealing with a few million documents. But when you’re dealing with billions, you have to understand what you’re trying to do at a deeper level if you really want it to work. And the sad reality is that sometimes something that looks like it’ll work in a prototype will just never scale to where it needs to be for it to be useful for our customers. This is a big part of what makes the work we do here fun, because there are some really really cool ideas for new features that we would really love to do, if we can just figure out how to make them scale!

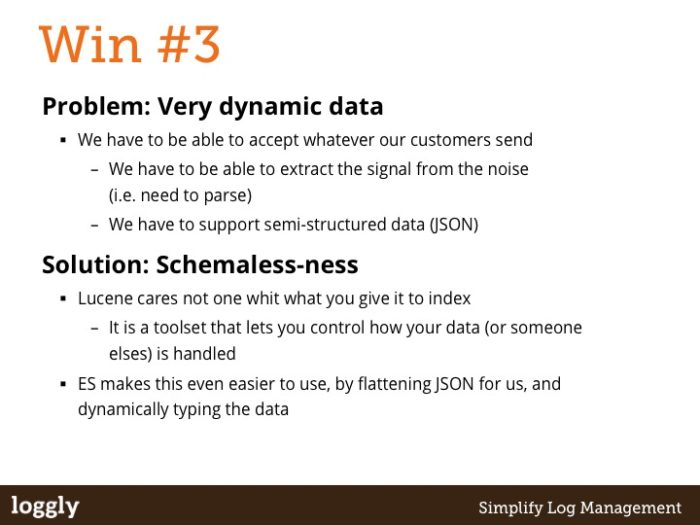

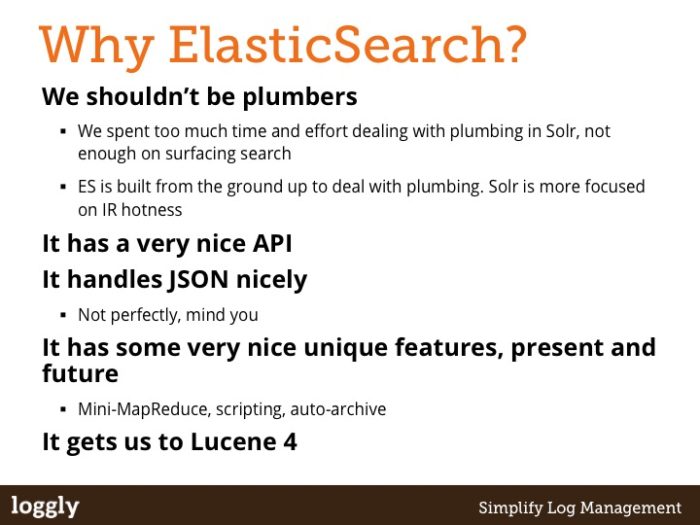

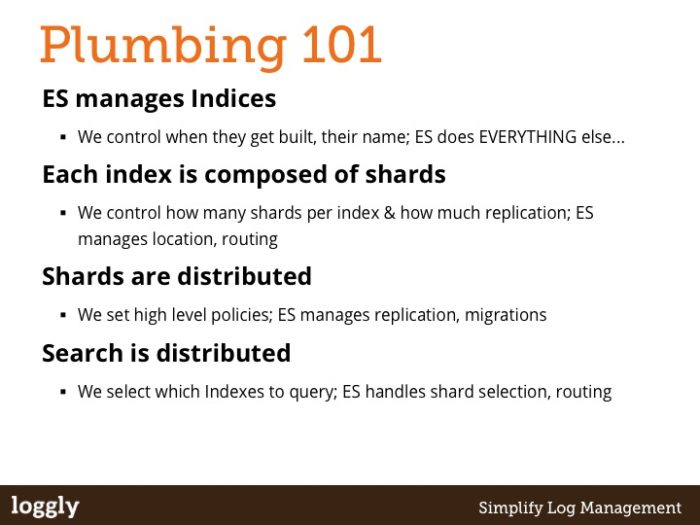

The last two slides cover the big reasons why we went with ElasticSearch, and a little bit on how it does what it does. Its a core part of our stack, so telling people a little bit about it is usually useful, even if they never get to touch it themselves.

So, thats the end of the deck.

But its just the start of something far more interesting.

The best part of doing this presentation is always the discussion we have afterwards, where people who have gained a new understanding of what is really going on under the covers come up with some truly crazy ideas (in the best possible sense of that word). I’ve never done this presentation without getting a fresh perspective on what we could be doing, and thats the beauty of talking about technically interesting stuff with smart people. I can’t speak for the people who attend, but its worth doing for me simply because of those discussions.

I hope you got something out of it too 🙂

The Loggly and SolarWinds trademarks, service marks, and logos are the exclusive property of SolarWinds Worldwide, LLC or its affiliates. All other trademarks are the property of their respective owners.

Jon Gifford