Sending CoreOS logs to Loggly

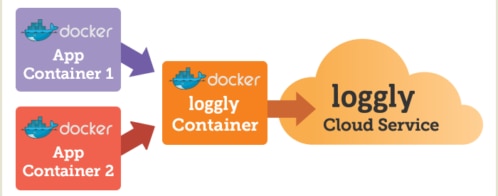

There are many ways to send logs to Loggly from a Docker container environment:

- Using a Loggly Docker container

- My sidecar approach

- Using logspout

All of these methods work on CoreOS, but there is an even simpler way, one that doesn't require running another container just to send logs to Loggly. This post shows how to bind a lightweight logging unit to any process you start on a CoreOS cluster using Fleet, the cluster scheduler built into CoreOS. Fleet schedules systemd units (on CoreOS these typically run Docker containers) across the machines of a cluster.

As with the other solutions, this approach does not buffer or retry log events during a network outage, so events written while Loggly is unreachable may never be delivered. This may or may not be important to you.

The following example assumes we have started a Fleet unit named `nginx.service` and that it is a global unit, which means it runs on every machine in the cluster. Given that, we can start a logging unit that reads the logs of the `nginx.service` unit on each machine and sends them to Loggly.

Here is the Fleet unit file, `nginx-logging.service`:

```
[Unit]
Description=nginx-logging
After=docker.service

[Service]
EnvironmentFile=/etc/environment
EnvironmentFile=/etc/stackName
TimeoutStartSec=0
User=core
Restart=always
RestartSec=10

[X-Fleet]
Global=true
```

Start the logging unit by issuing this command on the CoreOS cluster:

```
fleetctl start nginx-logging.service
```

Let's go through the `ExecStart` line to see what is going on; the rest of the file is standard Fleet usage. The command is a pipeline of three stages.

The first stage, `/usr/bin/journalctl -u nginx.service -o short-iso -f`, follows the journal of the `nginx.service` unit and prints each new entry with an ISO 8601 timestamp.
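To make this first stage concrete outside of Fleet, here is a minimal Python sketch that follows a unit's journal the same way, by spawning `journalctl` with the same flags and yielding one entry per line. It assumes `journalctl` is on the `PATH`; the function names `journal_command` and `follow_journal` are hypothetical and not part of the original setup.

```python
import subprocess
from typing import Iterator, List


def journal_command(unit: str) -> List[str]:
    """Build a journalctl invocation matching the first pipeline stage."""
    # -u: only this unit's entries; -o short-iso: ISO 8601 timestamps;
    # -f: keep following new entries, like `tail -f`.
    return ["journalctl", "-u", unit, "-o", "short-iso", "-f"]


def follow_journal(unit: str) -> Iterator[str]:
    """Yield the unit's journal lines as they are written (runs until closed)."""
    proc = subprocess.Popen(
        journal_command(unit),
        stdout=subprocess.PIPE,
        text=True,
        bufsize=1,  # line-buffered in text mode
    )
    try:
        assert proc.stdout is not None
        for line in proc.stdout:
            yield line.rstrip("\n")
    finally:
        # Stop journalctl when the caller stops iterating.
        proc.terminate()
        proc.wait()
```

Iterating over `follow_journal("nginx.service")` yields the same lines that the unit file pipes into the next stage.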

Its output is piped to the second stage: `/usr/bin/awk \'{ print "<34>1", $1, $2, $3, " - - ", "[<LOGGLY_KEY>@41058 tag=\'${LOGGLY_TAG_SERVICE_NAME}\' tag=\'${STACK_NAME}\']", $0; fflush(); }\'`. This looks complicated, but it simply wraps each journal line in the RFC 5424 syslog format that Loggly accepts, including your Loggly customer token: replace the `<LOGGLY_KEY>` placeholder with your own token. Two Loggly tags are added here as well: `${LOGGLY_TAG_SERVICE_NAME}`, which identifies the service the log belongs to, and `${STACK_NAME}`, which is read from an environment file on the CoreOS host so that every event carries the name of the environment it came from. The `fflush()` call makes awk write out each line immediately instead of holding it in a buffer.
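The awk program can be easier to follow as an ordinary function. Below is a minimal Python sketch of the same transformation, assuming one `journalctl -o short-iso` line as input; the names `to_loggly_syslog` and `_escape_param` are hypothetical. Unlike the awk command, it joins fields with single spaces and puts tag values in double quotes (RFC 5424 requires SD-PARAM values to be double-quoted), so its output is not byte-for-byte identical to the awk output.

```python
from typing import Iterable


def _escape_param(value: str) -> str:
    # RFC 5424 requires '\', '"' and ']' inside a PARAM-VALUE to be escaped.
    return value.replace("\\", "\\\\").replace('"', '\\"').replace("]", "\\]")


def to_loggly_syslog(line: str, token: str, tags: Iterable[str]) -> str:
    """Wrap one `journalctl -o short-iso` line in an RFC 5424 header."""
    # Split on runs of whitespace, as awk does by default for $1, $2, $3.
    fields = line.split()
    if len(fields) < 3:
        raise ValueError(f"not a short-iso journal line: {line!r}")
    timestamp, hostname, app = fields[0], fields[1], fields[2]
    # Structured data: SD-ID "<token>@41058" followed by one tag="..." per tag.
    params = [f"{token}@41058"] + [f'tag="{_escape_param(t)}"' for t in tags]
    structured_data = "[" + " ".join(params) + "]"
    # <34> is the PRI value (facility 4 * 8 + severity 2); "1" is the version.
    # "- -" are the nil PROCID and MSGID; the full original line is the message.
    return f"<34>1 {timestamp} {hostname} {app} - - {structured_data} {line}"
```

As in the awk version, the whole journal line (`$0`) is kept as the message body, so the timestamp and host appear both in the header and in the message.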

Here are the contents of the `stackName` file that the unit file loads with `EnvironmentFile`.

File: /etc/stackName

```
STACK_NAME="my-stack"
```

Finally, all of that is piped to the last stage: `/usr/bin/ncat --ssl logs-01.loggly.com 6514`. This uses the simple but very effective `ncat` tool to send the log to Loggly over SSL/TLS on port 6514, so events travel over reliable TCP through an encrypted channel. (Many syslog setups use UDP, a much less reliable transport: if a packet is lost, it is never retransmitted, so sending logs over UDP can lead to lost log events. You can read more about UDP versus TCP here.)
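For comparison, here is a minimal Python sketch of this last stage: it opens a TLS-wrapped TCP connection, similar to `ncat --ssl`, and writes one newline-terminated event per line, the same framing the awk output has. The function names `open_tls_stream` and `ship` are hypothetical. Like the pipeline in the unit file, it adds no buffering or retries, so it shares the network-outage limitation described earlier.

```python
import socket
import ssl
from typing import BinaryIO, Iterable


def open_tls_stream(host: str, port: int) -> ssl.SSLSocket:
    """Open a TLS-wrapped TCP connection, similar to `ncat --ssl host port`."""
    # The default context verifies the server certificate and hostname.
    context = ssl.create_default_context()
    # The 10 s timeout covers connecting and also applies to later socket I/O.
    raw = socket.create_connection((host, port), timeout=10)
    return context.wrap_socket(raw, server_hostname=host)


def ship(lines: Iterable[str], out: BinaryIO) -> int:
    """Write one newline-terminated event per line; return bytes written."""
    total = 0
    for line in lines:
        data = (line + "\n").encode("utf-8")
        out.write(data)
        out.flush()  # send each event promptly, like awk's fflush()
        total += len(data)
    return total
```

In use, you would open `open_tls_stream("logs-01.loggly.com", 6514)`, wrap the socket with `sock.makefile("wb")`, and pass that file object to `ship` together with the formatted lines. Keeping `ship` independent of the socket makes it easy to test against an in-memory buffer.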

The method described in this post is a quick and efficient way to send logs to Loggly from Docker containers on a CoreOS cluster. We like it because we don't have to start a syslog container just to ship logs out; instead, the cluster's native facilities (the systemd journal and Fleet) do the work.


Garland Kan