Five Ways That qbeats Uses Loggly to Gain Immediate Insight from Python and Nginx Logging

When your application has a problem, how important is it to get answers fast? For our customer qbeats, lightning-fast log analysis is critical because the company’s platform delivers time-sensitive content to equities and commodities traders. A delay of even a few minutes could bring user complaints, significant financial losses, or worse, customers defecting to a competitor.

In our newest case study, Maksym Markov, VP of engineering at qbeats, explains how the company uses Loggly and why it’s so effective for its 39-person development and DevOps team.

qbeats is a technology platform that analyzes the value of time-sensitive content and matches publishers to readers. It uses award-winning, patent-pending technology to price content dynamically based on demand, market signals, and impact, and to match that content to the relevant audience. The platform consists of approximately 20 separate services that handle content analysis and dynamic pricing, plus a service that pushes the right content to the right readers. It’s deployed as elastic balancing groups in the AWS cloud.

For qbeats, Loggly manages a combination of text and JSON logs, including:

- Application logs, mostly Python logs but also Java and C++

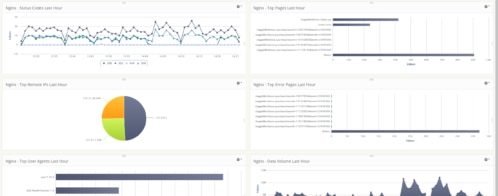

- Nginx logs

- Audit logs from AWS services

The case study describes five ways that qbeats uses Loggly and gains immediate insights with Loggly Dynamic Field Explorer™ and alerts.

Five Ways to Gain Insight

1) Providing guidance on where to look for problems

When the qbeats team troubleshoots an operational problem, Field Explorer is the first step in isolating it. Because the team then has a good idea of where to look, it can create more targeted searches. In addition, Field Explorer’s visual views help the team distinguish “normal” spikes in activity from abnormal ones.

2) Troubleshooting cross-application, cross-server issues

qbeats tags each event with its source host and module, plus a unique identifier for each piece of content. As a result, the development team can use its logs to trace a piece of content as it passes through each qbeats service, simply by searching on the identifier.
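To make this tagging concrete, here is a minimal sketch using Python’s standard `logging` module, assuming events are emitted as one JSON object per line. The field names (`host`, `module`, `content_id`), the logger name `pricing`, and the `JsonFormatter` and `make_logger` helpers are hypothetical illustrations, not qbeats’ actual schema or code; shipping the events to Loggly is out of scope here.

```python
import json
import logging
import socket
from datetime import datetime, timezone


class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON object.

    The field names are illustrative assumptions, not a schema
    taken from qbeats or Loggly.
    """

    def format(self, record: logging.LogRecord) -> str:
        event = {
            # ISO-8601 UTC timestamp derived from the record's creation time
            "timestamp": datetime.fromtimestamp(record.created, tz=timezone.utc).isoformat(),
            "level": record.levelname,
            "host": socket.gethostname(),  # source host
            "module": record.module,       # Python module that emitted the event
            # Content identifier passed via `extra=`; None if the caller omitted it
            "content_id": getattr(record, "content_id", None),
            "message": record.getMessage(),
        }
        return json.dumps(event)


def make_logger(stream=None) -> logging.Logger:
    """Build a logger for a hypothetical 'pricing' service (stderr by default)."""
    logger = logging.getLogger("pricing")
    for old in list(logger.handlers):  # avoid duplicate handlers on repeat calls
        logger.removeHandler(old)
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger


if __name__ == "__main__":
    log = make_logger()
    # Each service logs the same content_id for a given item.
    log.info("priced article", extra={"content_id": "c-12345"})
```

Because every service writes the same `content_id` field, one search on that value across the aggregated logs returns the item’s events from every service it touched.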

3) Increasing code quality and ownership

qbeats made bug fixing more visible by sending developers hourly error reports based on log data parsed in Loggly. The company found these reports to be a very effective tool for prioritizing bug fixes and motivating developers to take ownership of their code. The drop in error counts was dramatic: errors fell from more than 100 per hour to the point where the team sometimes goes a full week without seeing a single hourly digest.
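At its core, a digest like this counts error events per hour. The sketch below assumes JSON log lines carrying ISO-8601 `timestamp`, `level`, and `module` fields (a hypothetical schema), and it aggregates locally in Python rather than reproducing how qbeats built its reports from log data parsed in Loggly. The function names `hourly_error_digest` and `render_digest` are illustrative.

```python
import json
from collections import Counter
from datetime import datetime


def hourly_error_digest(lines):
    """Count ERROR events per (hour, module) from JSON log lines.

    Assumes each line is a JSON object with ISO-8601 "timestamp",
    "level", and "module" fields (a hypothetical schema).
    """
    counts = Counter()
    for line in lines:
        event = json.loads(line)
        if event.get("level") != "ERROR":
            continue  # only errors go into the digest
        ts = datetime.fromisoformat(event["timestamp"])
        hour = ts.replace(minute=0, second=0, microsecond=0)  # truncate to the hour
        counts[(hour.isoformat(), event.get("module", "unknown"))] += 1
    return counts


def render_digest(counts):
    """Format counts as plain-text report lines, busiest first."""
    if not counts:
        return ""  # no errors this hour: nothing to send
    return "\n".join(
        f"{hour}  {module}: {n} error(s)"
        for (hour, module), n in counts.most_common()
    )


if __name__ == "__main__":
    sample = [  # made-up sample lines for illustration only
        '{"timestamp": "2024-01-01T10:05:00", "level": "ERROR", "module": "pricing"}',
        '{"timestamp": "2024-01-01T10:40:00", "level": "ERROR", "module": "pricing"}',
        '{"timestamp": "2024-01-01T10:41:00", "level": "INFO", "module": "pricing"}',
        '{"timestamp": "2024-01-01T11:10:00", "level": "ERROR", "module": "ingest"}',
    ]
    print(render_digest(hourly_error_digest(sample)))
```

Returning an empty string when there are no errors lets a scheduler such as cron skip sending the report entirely, which matches the quiet weeks with no hourly digest described above.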

4) Accelerating problem responses

qbeats uses alerts to learn about problems before customers do and to help developers act on them faster. The team proactively monitors for critical errors, issues with key pieces of content or publishers, and conditions it knows could affect user satisfaction.

5) Keeping AWS elastic balancing groups healthy

qbeats uses Loggly to manage AWS audit logs that can uncover problems such as AWS connectivity issues or data center downtime. Knowing what’s in these logs at any moment can be the key to running a healthy cloud application.

Log Management Is Essential in a Virtual Server World

In talking to us about the importance of log management to cloud-based companies, Markov raised an important point: you can’t count on being able to SSH into a virtual server! “Since virtual machines are being deployed and decommissioned based on demand, by the time you discover a problem, the affected server could have gone away, taking its logs with it,” he says. “How can you understand what happened without aggregating your logs?”

He’s right. But log aggregation is only the beginning of the value that Loggly delivers to cloud-based companies. Be sure to read this case study for more detail on the most critical benefit: immediate insights from log data to drive the business. And if you haven’t tried Loggly for yourself, now is the time to sign up for a free trial!

Follow qbeats on Twitter: @qbeatsnews

The Loggly and SolarWinds trademarks, service marks, and logos are the exclusive property of SolarWinds Worldwide, LLC or its affiliates. All other trademarks are the property of their respective owners.

Hoover J. Beaver