Loggly Q&A: Talking with James Urquhart About Continuous Integration and Deployment, DevOps, and the Role of Log Monitoring

James Urquhart is a product manager for Dell in the Cloud Manager division. He’s been named one of 10 most influential people in cloud computing by MIT Technology Review, and also recognized as one of the top three cloud bloggers by The Next Web. Follow him on Twitter at @jamesurquhart.

Loggly: Tell us a little about Dell Cloud Manager.

James Urquhart:Companies are realizing that they have a multi-cloud problem, so this product is an orchestration environment to manage infrastructure as a service workloads across multiple clouds such as AWS, GCE, Joyent, Azure, Scale Matrix, Cloud Stack, Open Stack and VMWare. The product helps with governance, access control, integration with management tools and automation tools, using an integrated console so you can see across all those clouds.

Loggly: What are the goals for continuous deployment and rapid release in cloud environments?

Urquhart: So much in business today is reacting to disruptive threats – taking advantage of opportunities and defending against threats. And the time period to do this is usually very short. So the industry needed to build software that could support these rapidly changing human processes. For instance, the marketing team needs to release a new promotion or new ways to engage with customers, and they want to do this every few weeks or months not once a year. Take a company like Caterpillar. Its machines don’t change very often, but it also builds service apps for its equipment – and these services that change a lot. Web businesses are constantly looking for new partnerships, and they’re always learning from customers and introducing new features to avoid being disrupted by competitors. All this means that companies are moving toward applications that have frequent iterations so that they can rapidly change and be ready for the unknown.

Loggly: What are some of the challenges in managing a rapid release environment?

Urquhart: Everyone needs to understand the flow of development at any given moment. If you don’t have strong collaboration in the deploy process it can stall progress or you run the risk of making a decision that is counter to assumptions made earlier in the process, while you’re waiting for somebody’s response. And then you might break the system. Another big risk is if you are not prepared to deal with failure. If a server crashes or a bug shows up that didn’t get caught in testing, you’ve got to respond quickly. Companies do this either through rollback or by applying a rapid fix. So the ability to handle an imperfect situation is very important. The third major risk is releasing the right thing in the first place so that you are moving forward and not sideways. If you make a change that nobody wants, people aren’t going to use the software or service as often.

Loggly: What are some other ways to manage risk in release cycles?

Urquhart: Don’t release a big pile of code all at once. If you release one small update, you can fix it if there is a problem or start making adjustments early on by incorporating customer feedback. That helps you ensure you’re in fact building the right app that people want and will use. Some companies like Etsy release two different versions at once across two user groups to see which one is more popular. Properly automating and managing the QA process is also critical. If you can run through the testing cycle enough times to catch anything glaring before the code is released, your team will avoid a lot of problems later on. I advise spending as much time on QA as you do on the build and release processes.

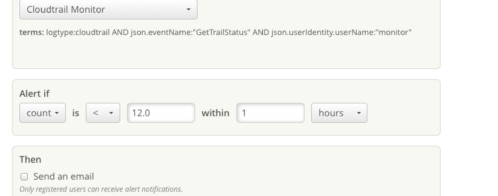

Loggly: What role can log monitoring and analysis (or benchmarking with log analysis) play in mitigating these risks?

Urquhart: Logs are absolutely critical to both measuring the “success” (e.g. performance, availability, usage, etc.) of a service, and to troubleshooting issues when they arise. When it comes to coordinating activity across a distributed system, many techniques have been tried, but the fallback is log aggregation, analysis and monitoring. So, I consider log monitoring, analysis and benchmarking via log analysis a critical tool in the tool chest. It’s not the only tool, by any means, but it is one of the first elements that should be integrated into a continuous integration and deployment environment.

Loggly: How can you work on better collaboration in DevOps?

Urquhart: It involves a lot of tactics. First, developers need to be working with the Ops teams to increase automation, and operations teams need developers to engage in the process. There needs to be a lot of exchange between these individuals around what part of the application deployment process should be automated, how it should be automated, how the code should flow through the deploy and build process, and so on. Companies often patch together various tools to get all of these pieces of automation done but primarily, I see the use of GitHub and Jenkins. Chef, Puppet, Ansible and their ilk are also key components.

Loggly: Can log data play a role in fostering this collaboration?

Urquhart: Log data is one of the key ways that software collaborates with the humans who build and run it. Well-designed logs are powerful records of the history of a software system, and yet they are generally built using easy to share technologies. Typically at least somewhat human readable, log data translates events into a form that can be interpreted by people. But the raw data isn’t good enough. The right analysis tool allows humans to further parse that data, share information with other humans, and visualize what the software is trying to say.

The Loggly and SolarWinds trademarks, service marks, and logos are the exclusive property of SolarWinds Worldwide, LLC or its affiliates. All other trademarks are the property of their respective owners.

James Urquhart