Why It Pays for Elasticsearch Developers to Know About Cluster State

As we have discussed in our previous posts, Elasticsearch forms the underpinning of a lot of Loggly’s functionality. We learned a lot during the process of taking this all-purpose technology, applying it to our log management use cases, and scaling it to serve 5,000+ customers in near real-time. In keeping with our commitment to open source, we wanted to share some of these learnings back to the community. This post talks about a key Elasticsearch concept that has big implications for its behavior at scale: cluster state.

What is Elasticsearch Cluster State?

One of Elasticsearch’s great strengths is that it is schemaless. Starting from scratch, you can add a JSON document with any number of fields to an index without first telling ES anything about what those fields are. It will happily add those fields to the index so that they can later be searched on. These new fields—what they’re named, what type they are, and what index they live in—are automatically added to Elasticsearch’s cluster state. (This assumes you’re using the default dynamic mapping settings; just like almost everything else in Elasticsearch, you can tweak or even disable this behavior.)
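To make that concrete, here is a toy model of dynamic mapping in plain Python. It is only a sketch of the idea, not Elasticsearch's actual logic: the function names (`infer_type`, `apply_dynamic_mapping`) and the type labels are illustrative assumptions, and real ES type inference has many more rules.

```python
def infer_type(value):
    """Guess a field type from a JSON value (illustrative labels only)."""
    # bool must be checked before int: in Python, bool is a subclass of int.
    if isinstance(value, bool):
        return "boolean"
    if isinstance(value, int):
        return "long"
    if isinstance(value, float):
        return "double"
    if isinstance(value, str):
        return "string"
    return "object"


def apply_dynamic_mapping(mapping, document):
    """Add any unseen fields to the mapping; return the names that were added."""
    added = []
    for field, value in document.items():
        if field not in mapping:
            mapping[field] = infer_type(value)
            added.append(field)
    return added


# Hypothetical log events: the second one introduces one new field.
mapping = {}
first = apply_dynamic_mapping(mapping, {"status": 200, "message": "ok"})
second = apply_dynamic_mapping(mapping, {"status": 404, "latency_ms": 12.5})
```

In this model, every entry that lands in `mapping` stands for a piece of metadata that the real cluster would have to record in its cluster state.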

There’s one catch, of course: a field can’t have two types simultaneously in the same index. If you try to add a field of a certain type (say, a string), and that field already exists in the target index as a different type (an integer, suppose), you have a mapping conflict, and how Elasticsearch handles it depends on the types involved. For example:

- Indexing an integer into a field that already exists as a string will result in silent type coercion: the number is stored as a string.

- Trying to index a string that can’t be parsed as a number into an integer-typed field will result in an exception, and ES will reject the document outright.

So keep an eye on ES’s responses, especially if you’re doing bulk indexing.
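One way to keep that eye on bulk responses is sketched below. It relies on the shape of the bulk API's JSON response: a top-level `errors` flag plus an `items` list in which each entry is keyed by its action name. The helper name `failed_bulk_items` and the sample response are our own hand-written illustrations, not output captured from a real cluster.

```python
def failed_bulk_items(bulk_response):
    """Return (position, action, status, error) for each rejected bulk item."""
    failures = []
    # The top-level "errors" flag is True if at least one item failed.
    if not bulk_response.get("errors"):
        return failures
    for position, item in enumerate(bulk_response.get("items", [])):
        # Each item is a single-key dict keyed by the action ("index", "create", ...).
        for action, result in item.items():
            if "error" in result:
                failures.append(
                    (position, action, result.get("status"), result["error"])
                )
    return failures


# Hand-written sample response, for illustration only.
sample_response = {
    "took": 5,
    "errors": True,
    "items": [
        {"index": {"_index": "logs", "_id": "1", "status": 201}},
        {
            "index": {
                "_index": "logs",
                "_id": "2",
                "status": 400,
                "error": {"type": "mapper_parsing_exception"},
            }
        },
    ],
}

failures = failed_bulk_items(sample_response)
```

A bulk request can return HTTP 200 even when individual documents were rejected, so checking the per-item results is the only way to notice them.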

Cluster State Is Your Yellow Pages

When you then want to search on those fields, Elasticsearch, just like any inverted index, needs to know what and where they are. A node receiving a query first needs to know where the shards reside for the index you’re querying, and then which fields exist in the index and what type they are. (You can’t do a numeric range search against a string type field, for instance.) So it looks to the cluster state.

As its name suggests, cluster state is global to an Elasticsearch cluster: it contains all the metadata (schema, location, size, etc.) for all of the shards in the entire cluster, and is kept current on every node in the cluster.

How does it stay in sync across a cluster that may contain dozens of nodes? Cluster state is maintained by the elected ES master, which receives updates from the data nodes as their state changes and in turn broadcasts those changes to all of the other nodes in the cluster. Easy, right?

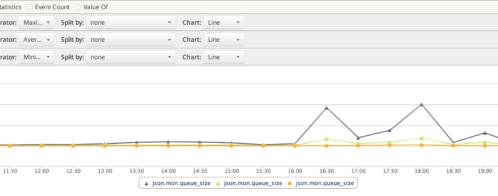

… If Your Yellow Pages Weighed 300 Pounds and Arrived Every 2½ Minutes

But remember: that’s every field in every shard in every index on every node in the cluster. So if you have a lot of fields, as when you’re using ES as a data store for documents with highly variable and dynamic schemata, cluster state can grow quite large. This is particularly true for the Loggly service, since our customers send us logs in arbitrary formats, with any number of unique fields, and in vast quantities. We measure our intake in hundreds of thousands of events per second. And Loggly’s cluster state measures in the hundreds of megabytes.

Hope You’ve Got a Big Porch

Alarms may be ringing for some of you already: “You’re telling me that ES broadcasts several hundred megabytes of data to every single node every time anything changes anywhere?” It’s not quite as bad as it sounds. ES does several things to keep from spending all day on cluster state management:

- Since ES 2.0, cluster state changes have been sent as diffs, which is a huge improvement over the previous releases.

- The cluster state blob is significantly compressed when communicated between ES nodes.

- ES is relatively smart about batching and merging cluster state updates, especially in more recent versions. (See our upcoming post on Pending Tasks if you want to know what could happen in earlier versions when things weren’t so optimized.)
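The first two points can be illustrated with a small sketch. This is a toy model under loud assumptions: the cluster state is flattened to a dict of hypothetical `index.field` keys, JSON stands in for ES's internal serialization, and `zlib` stands in for whatever compression ES uses on the wire. None of the function names come from Elasticsearch.

```python
import json
import zlib


def state_diff(old, new):
    """Describe how to turn `old` into `new` without resending everything."""
    changed = {k: v for k, v in new.items() if k not in old or old[k] != v}
    removed = [k for k in old if k not in new]
    return {"changed": changed, "removed": removed}


def apply_diff(old, diff):
    """Rebuild the new state on a receiving node from its old copy and a diff."""
    result = dict(old)
    for key in diff["removed"]:
        result.pop(key, None)
    result.update(diff["changed"])
    return result


def wire_size(payload):
    """Compressed size in bytes of a JSON-serialized payload (a stand-in metric)."""
    raw = json.dumps(payload, sort_keys=True).encode("utf-8")
    return len(zlib.compress(raw))


# Hypothetical state: one index with 1,000 string fields, then one new field.
old_state = {f"logs-2016.01.01.field_{i}": "string" for i in range(1000)}
new_state = dict(old_state)
new_state["logs-2016.01.01.response_time"] = "long"

diff = state_diff(old_state, new_state)
full_bytes = wire_size(new_state)
diff_bytes = wire_size(diff)
```

Comparing `diff_bytes` with `full_bytes` shows why diffs matter: adding a single field means shipping one entry rather than the whole map.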

Even so, it behooves you to keep an eye on the size of your cluster state. One of the earliest things we discovered running ES at scale, with massive amounts of highly variable data, is that there is an upper limit to the amount of cluster state that ES can handle before the cluster critically fails. But the gruesome details of that story—and how we conquered our cluster state problem at Loggly—are coming in a future post, so I won’t spoil it for you.
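In the meantime, if you want a rough number for your own cluster, a minimal sketch like the one below may help. It fetches the state from the `/_cluster/state` endpoint and measures its size as compact JSON. The base URL is an assumption (an unsecured node on localhost), the helper names are ours, and the JSON size is only a proxy: it is not the size of what ES actually sends between nodes.

```python
import json
import urllib.request


def serialized_size_bytes(state):
    """Size of a cluster state dict when serialized as compact UTF-8 JSON."""
    return len(json.dumps(state, separators=(",", ":")).encode("utf-8"))


def fetch_cluster_state(base_url="http://localhost:9200"):
    """Fetch the full cluster state as a dict (base_url is an assumption)."""
    with urllib.request.urlopen(base_url + "/_cluster/state") as response:
        return json.load(response)


# Usage against a live cluster (not run here):
#   state = fetch_cluster_state()
#   print(serialized_size_bytes(state) / (1024 * 1024), "MiB")
```

Tracking this number over time, rather than looking at it once, is what tells you whether new fields are piling up faster than you expect.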

Want to See It?

Here is an example of the actual cluster state for a two-node cluster with a single index containing a single document. Ours looks a lot different from this, because we have a lot of machines in our cluster, a lot more indices, and more mappings than you would believe 🙂 But this should give you an idea of what data is actually maintained in the cluster state.



Hoover J. Beaver